GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro: Which Flagship AI Model Wins in 2026?

Last updated: March 6, 2026 · Pricing verified as of March 2026

Claude Opus 4.6 leads coding quality across current vendor-reported results, Gemini 3.1 Pro delivers 1M context at $2/1M input (source: ai.google.dev pricing), and GPT-5.4 is now listed on OpenRouter at $2.50/$20 with 1M context and 128K max output. If you need to pick a model today, Gemini 3.1 Pro is still the best value for most workloads, Opus 4.6 is still strongest for complex coding and agent tasks, and GPT-5.4 should be evaluated in parallel through routing because public benchmark coverage is still limited.

Here's the full breakdown.

TL;DR

- Gemini 3.1 Pro is the price-performance king: $2.00/$12.00 per 1M tokens with 1M context and 80.6% SWE-bench. Hard to beat for most production workloads.

- Claude Opus 4.6 wins on coding quality: 80.8% SWE-bench (single-attempt table) and 81.42% with prompt modification, 64K max output, Agent Teams for multi-agent orchestration, and a full 1M context window at standard Anthropic pricing.

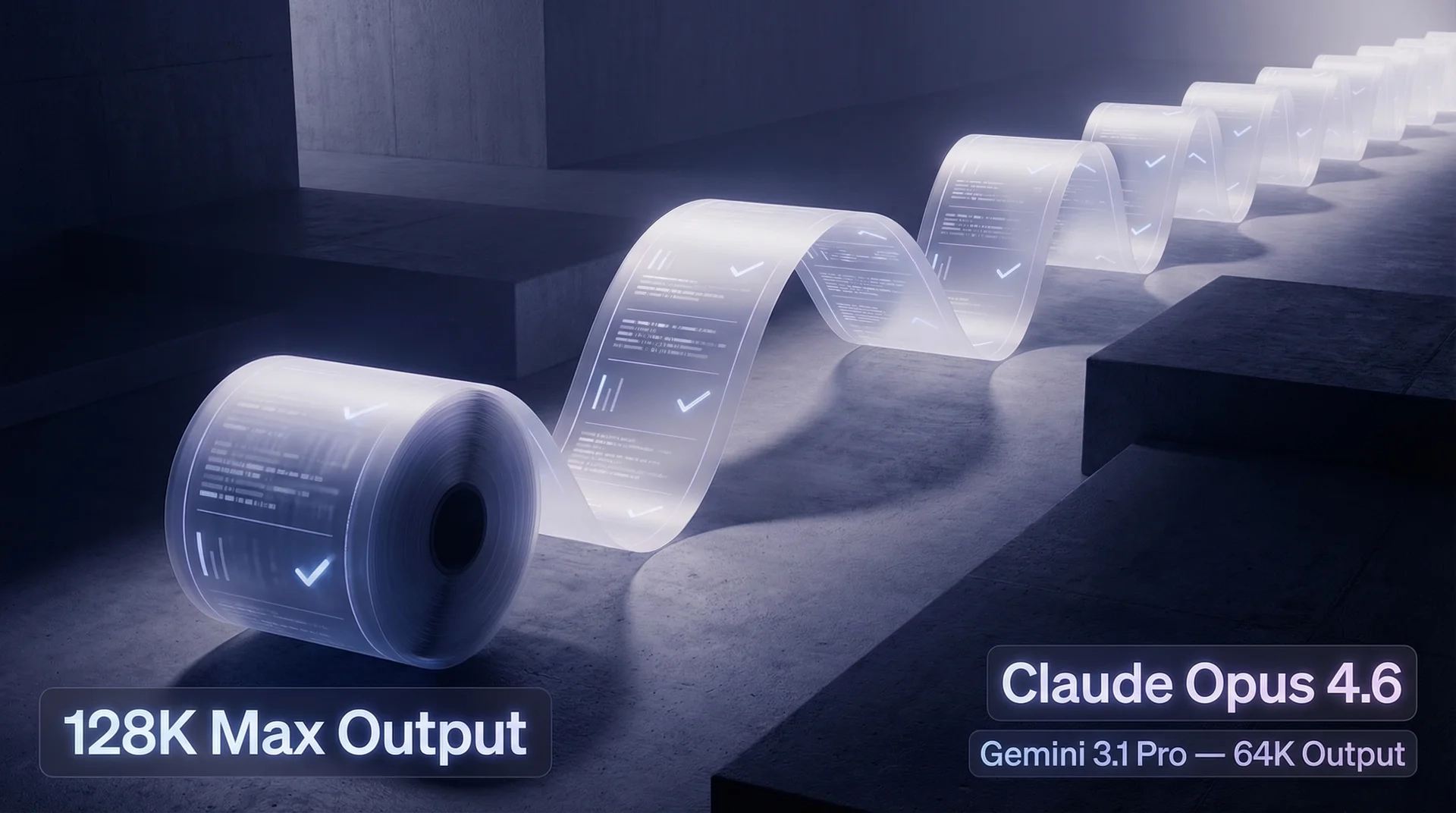

- GPT-5.4 is now listed on OpenRouter: $2.50/$20 per 1M tokens, $0.625 cached input, 1M context, 128K max output. Independent benchmark coverage is still limited.

- For budget-sensitive teams: GPT-5.2 at $1.75/$14 per 1M tokens with 400K context and 80.0% SWE-bench is still a strong contender.

- Don't block delivery: Ship with Gemini 3.1 Pro or Claude Opus 4.6 now, and run GPT-5.4 side-by-side in your eval suite.

Quick Comparison Table

Every cell is traced to a source listed below the table; GPT-5.4 figures come from its OpenRouter listing. Pricing as of March 2026.

| | Claude Opus 4.6 | Gemini 3.1 Pro | GPT-5.4 (OpenRouter) | GPT-5.2 |
|---|---|---|---|---|
| Provider | Anthropic | Google DeepMind | OpenAI | OpenAI |
| Status | ✅ Available | ✅ Available | ✅ Available via OpenRouter | ✅ Available |
| Context | 1M tokens | 1M tokens | 1M tokens | 400K tokens |
| Max output | 64K tokens | 64K tokens | 128K tokens | 128K tokens |
| Input (/1M tokens) | $5.00 | $2.00 (≤200K) / $4.00 (>200K) | $2.50 (cached input: $0.625) | $1.75 |
| Output (/1M tokens) | $25.00 | $12.00 (≤200K) / $18.00 (>200K) | $20.00 | $14.00 |
| Reasoning | Extended thinking | Standard | Public mode naming still limited | Standard + deep thinking |
| SWE-bench | 80.8% (single) / 81.42% (prompt mod.) | 80.6% (single) | No widely accepted public number yet | 80.0% |
| Best for | Complex coding, agent orchestration | Long-context, multimodal, value | Early GPT-5.4 adoption and internal evals | Budget coding, general |

Sources: anthropic.com/pricing · anthropic.com/docs/models/claude-opus-4-6 · ai.google.dev pricing · deepmind.google model card · platform.openai.com/docs/models/gpt-5.2 · openrouter.ai/openai/gpt-5.4

When to Use Each Model

Pick Claude Opus 4.6 if you need the best code quality

In DeepMind's comparison table, Opus 4.6 is listed at 80.8% on SWE-bench (single attempt). Anthropic separately reports up to 81.42% with prompt modification and notes 25-trial averaging in its methodology (source: anthropic.com/news/claude-opus-4-6). The 64K max output is enough to generate large file diffs, full test suites, or multi-file refactors in a single response, although GPT-5.4 and GPT-5.2 list a higher 128K ceiling.

The Agent Teams feature is genuinely useful if you're building multi-agent systems. Opus can coordinate sub-agents, delegate tasks, and maintain context across orchestrated workflows.

The trade-off is still cost. Claude Opus 4.6 now includes the full 1M context window at standard Anthropic pricing, but at $5/$25 per 1M tokens it remains materially more expensive than Gemini 3.1 Pro's $2/$12 base tier.

Best scenarios: SWE-bench-style code repair, multi-agent pipelines, long-form code generation (up to 64K output per response), safety-critical applications.

Pick Gemini 3.1 Pro if you want the best bang for your buck

Gemini 3.1 Pro offers a rare combination: 1M native context and genuinely competitive benchmarks at the lowest output price in this comparison. At $2.00/$12.00 per 1M tokens (≤200K context), it costs less than half as much as Opus 4.6 while trailing it by only 0.2 percentage points on SWE-bench.

Where Gemini really shines beyond coding:

- GPQA Diamond: 94.3% (PhD-level science reasoning; the highest score in Google's published comparison)

- ARC-AGI-2: 77.1% (novel problem-solving)

- Humanity's Last Exam (HLE): 44.4% (expert-level questions across many domains)

- Terminal-Bench 2.0: 68.5% (terminal-based coding tasks)

- Native multimodal: text, image, audio, and video input in a single model

The 64K max output is the main limitation compared with GPT-5.4 and GPT-5.2, which both list 128K for single-response generation.

Best scenarios: Long document analysis (legal, medical), multimodal applications (video/audio processing), cost-sensitive production APIs, codebases that fit in 1M context.

Try GPT-5.4 now if you want early production signals

Current public listing data (OpenRouter):

- 1M token context window

- 128K max output

- $2.50 / 1M input, $0.625 / 1M cached input, $20.00 / 1M output

What is still missing: broad, independent benchmark coverage across real production workloads.

Pragmatic approach: keep Gemini/Opus in production paths, route a controlled share of traffic to GPT-5.4, and promote only after your own evals pass.
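One common way to route a controlled share of traffic is a deterministic, hash-based split: each user or session ID is hashed into one of 10,000 buckets, and buckets below the canary threshold go to GPT-5.4. This is a minimal sketch under stated assumptions: the 5% default share is illustrative, `openai/gpt-5.4` follows the OpenRouter listing URL, and `BASELINE_MODEL` is a hypothetical placeholder for whatever Gemini or Opus model ID your provider uses.

```python
import hashlib

# "openai/gpt-5.4" follows the OpenRouter listing URL; BASELINE_MODEL is a
# hypothetical placeholder for whichever production model ID you use.
CANARY_MODEL = "openai/gpt-5.4"
BASELINE_MODEL = "your-production-model"
BUCKETS = 10_000


def bucket_for(request_key: str) -> int:
    """Map a stable key (user or session ID) to a bucket in [0, BUCKETS)."""
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    # The first 8 bytes of SHA-256 are spread evenly enough for traffic splits.
    return int.from_bytes(digest[:8], "big") % BUCKETS


def choose_model(request_key: str, canary_share: float = 0.05) -> str:
    """Route roughly `canary_share` of keys to the canary model.

    The same key always maps to the same bucket, so a given user keeps
    seeing the same model for the duration of the experiment.
    """
    if not 0.0 <= canary_share <= 1.0:
        raise ValueError("canary_share must be between 0 and 1")
    threshold = round(canary_share * BUCKETS)
    return CANARY_MODEL if bucket_for(request_key) < threshold else BASELINE_MODEL


if __name__ == "__main__":
    keys = [f"user-{i}" for i in range(20_000)]
    share = sum(choose_model(k) == CANARY_MODEL for k in keys) / len(keys)
    print(f"observed canary share: {share:.3f}")
```

Because a key's bucket never changes, raising `canary_share` in steps keeps already-assigned users on GPT-5.4 while adding new ones, which makes before/after comparisons easier to read.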

Deep Dive: Context Window

| Model | Context Window | Notes |
|---|---|---|
| Gemini 3.1 Pro | 1M tokens | Production-ready 1M context |
| GPT-5.4 | 1M tokens | Listed on OpenRouter |
| GPT-5.2 | 400K tokens | Available now |
| Claude Opus 4.6 | 1M tokens | Full 1M context window at standard Anthropic pricing |

For teams working with large codebases, legal documents, or research corpora, Gemini 3.1 Pro and Claude Opus 4.6 now both reach 1M context. The more meaningful trade-off is cost, modality, and workflow fit rather than a hard context-window gap.
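Before relying on a 1M window, it helps to estimate whether a codebase actually fits. The sketch below assumes the rough rule of thumb of about 4 characters per token; real tokenizers differ by model and language, so treat the result as a ballpark and confirm with your provider's token-counting tools. The file suffixes, the 4.0 ratio, and the 64K output reserve are all assumptions.

```python
import math
from pathlib import Path

# Assumption: roughly 4 characters per token. Real tokenizers differ by model,
# language, and coding style, so treat every result here as a ballpark.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token estimate derived from character count."""
    return math.ceil(len(text) / chars_per_token)


def estimate_repo_tokens(root: str, suffixes: tuple = (".py", ".ts", ".go", ".md")) -> int:
    """Sum estimated tokens over files under `root` whose suffix is listed."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(prompt_tokens: int, context_window: int = 1_000_000,
                    reserved_output: int = 64_000) -> bool:
    """Check that the prompt plus room for the reply fits in the window.

    Reserving output space is conservative: several providers count output
    tokens against the same window.
    """
    return prompt_tokens + reserved_output <= context_window
```

For example, `fits_in_context(estimate_repo_tokens("path/to/repo"), context_window=400_000, reserved_output=128_000)` checks a repository (the path is a placeholder) against GPT-5.2's 400K window. Anything above 200K prompt tokens also lands in Gemini 3.1 Pro's higher pricing tier.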

Deep Dive: Reasoning Capabilities

| Model | Reasoning Mode | Key Strength |
|---|---|---|
| Claude Opus 4.6 | Extended thinking | Multi-step debugging, architectural planning |
| Gemini 3.1 Pro | Standard (with thinking support) | GPQA Diamond 94.3%, ARC-AGI-2 77.1% |
| GPT-5.4 | Public mode naming still limited | Validate with your own eval suite |

Claude Opus 4.6's extended thinking is most effective for structured, multi-step reasoning: debugging complex code paths, analyzing system architectures, and working through long chains of dependencies.

Gemini 3.1 Pro's 94.3% GPQA Diamond score is genuinely impressive: this benchmark tests PhD-level science questions, and it is the top GPQA Diamond result in Google's published comparison. For research-oriented workloads, Gemini's reasoning breadth is a real advantage even without a branded "extended thinking" mode.

Deep Dive: Pricing & Cost Analysis

Cost Per Task (Estimated)

Based on typical token usage per task type. Prices at official rates.

| Task | Tokens (in/out) | GPT-5.2 | Gemini 3.1 Pro | Claude Opus 4.6 |
|---|---|---|---|---|
| Simple chat | 1K / 500 | $0.009 | $0.008 | $0.018 |
| Code review (single file) | 5K / 2K | $0.037 | $0.034 | $0.075 |
| Long doc analysis | 100K / 5K | $0.245 | $0.260 | $0.625 |
| Full codebase (200K+ ctx) | 300K / 10K | $0.665 | $1.380* | $1.750 |

*Gemini 3.1 Pro >200K context tier: $4.00/$18.00 per 1M tokens applies.

At high context utilization (>200K tokens), Gemini moves to its long-context tier while Claude Opus 4.6 stays on its standard Anthropic rate. That narrows the relative gap (Opus costs about 2.4x as much as Gemini in the long-doc example but only about 1.3x in the full-codebase example), but Gemini remains the cheaper route in this example.
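The per-task figures in the cost table follow from simple per-token arithmetic. This sketch reproduces them from the list prices quoted in this article, including Gemini 3.1 Pro's switch to $4.00/$18.00 once the prompt exceeds 200K tokens. It assumes the tier is decided by prompt size and applies to the whole request, and it ignores caching, batch discounts, and gateway pricing; the model keys are illustrative labels, not API model IDs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens, with an optional long-context tier."""
    input_per_m: float
    output_per_m: float
    long_threshold: Optional[int] = None  # prompt tokens; None means flat pricing
    long_input_per_m: float = 0.0
    long_output_per_m: float = 0.0


# List prices as quoted in this article (March 2026); verify before relying on them.
PRICES = {
    "gpt-5.2": Pricing(1.75, 14.00),
    "gemini-3.1-pro": Pricing(2.00, 12.00, 200_000, 4.00, 18.00),
    "claude-opus-4.6": Pricing(5.00, 25.00),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one request at list price (no caching or discounts)."""
    p = PRICES[model]
    in_rate, out_rate = p.input_per_m, p.output_per_m
    # Assumption: the whole request moves to the long tier once the prompt
    # exceeds the threshold, matching Gemini's >200K pricing.
    if p.long_threshold is not None and input_tokens > p.long_threshold:
        in_rate, out_rate = p.long_input_per_m, p.long_output_per_m
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


if __name__ == "__main__":
    tasks = {
        "Simple chat": (1_000, 500),
        "Code review (single file)": (5_000, 2_000),
        "Long doc analysis": (100_000, 5_000),
        "Full codebase (200K+ ctx)": (300_000, 10_000),
    }
    for name, (tin, tout) in tasks.items():
        row = ", ".join(f"{m}: ${task_cost(m, tin, tout):.3f}" for m in PRICES)
        print(f"{name}: {row}")
```

Swapping in your own measured token counts per task type is usually more informative than the illustrative sizes above.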

Through EvoLink (evolink.ai/models), you can access both Claude Opus 4.6 (from $4.50/1M input, 10% below Anthropic's list price) and Gemini 3.1 Pro at discounted rates via a unified OpenAI-compatible API.

Deep Dive: Coding Performance

| Model | SWE-bench | Conditions | Source |
|---|---|---|---|
| Claude Opus 4.6 | 80.8% (single) / 81.42% (prompt mod.) | Mixed sources | deepmind.google model card / anthropic.com/news/claude-opus-4-6 |
| Gemini 3.1 Pro | 80.6% (single) | Google evaluation | deepmind.google model card |
| GPT-5.2 | 80.0% | OpenAI evaluation | platform.openai.com |
| GPT-5.4 | No widely accepted public number yet | — | Available via OpenRouter |

Important caveat: SWE-bench scores from different vendors use different scaffolds and evaluation setups. The gaps between 80.0%, 80.6%, and 80.8% are small enough that test conditions can matter more than model capability. Don't over-index on 0.2-percentage-point differences.
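A quick way to see why sub-point gaps are noise is to treat each benchmark task as a pass/fail trial and compute a normal-approximation (Wald) 95% confidence interval. This sketch assumes the scores come from SWE-bench Verified's 500 tasks and treats tasks as independent, which is a simplification; scaffold differences, which only add uncertainty, are ignored entirely.

```python
import math


def binomial_ci(score: float, n_tasks: int, z: float = 1.96) -> tuple[float, float]:
    """Wald 95% interval for a pass rate.

    score: fraction of tasks solved (e.g. 0.808); n_tasks: benchmark size.
    """
    se = math.sqrt(score * (1.0 - score) / n_tasks)
    return score - z * se, score + z * se


if __name__ == "__main__":
    # Assumption: SWE-bench Verified (500 tasks), tasks treated as independent.
    for name, score in [("Opus 4.6", 0.808), ("Gemini 3.1 Pro", 0.806), ("GPT-5.2", 0.800)]:
        lo, hi = binomial_ci(score, 500)
        print(f"{name}: {score:.1%} (95% CI {lo:.1%} to {hi:.1%})")
```

At 500 tasks each interval is roughly ±3.5 points wide, so all three scores overlap heavily. Because every model runs on the same tasks, a paired test on per-task results (for example McNemar's test) is the more rigorous comparison when that data is available.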

What actually differentiates these models for coding in practice:

- Opus 4.6: Strong coding quality plus 64K max output for long-form generation and multi-step repair workflows.

- Gemini 3.1 Pro: 1M context lets you feed an entire codebase. Terminal-Bench 2.0 score of 68.5%.

- GPT-5.2: The lowest input price here ($1.75/1M) with 80.0% SWE-bench, and the cheapest option on the input-heavy tasks in the cost table above. Good enough for most code review and generation tasks.

Decision Framework

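Use this based on your primary constraint:

- Best coding quality or multi-agent orchestration: Claude Opus 4.6

- Best price-performance, long context, or multimodal input: Gemini 3.1 Pro

- Budget coding and general tasks: GPT-5.2

- Early GPT-5.4 adoption: route a controlled share of traffic and promote only after your own evals pass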

What About GPT-5.4? Should You Switch Now?

Short answer: don't hard-switch immediately; run a controlled rollout.

GPT-5.4 is now available through OpenRouter, but you should still validate quality, latency, and cost on your own workloads before a full migration.

The pragmatic approach:

- Start building now with Gemini 3.1 Pro (best value) or Claude Opus 4.6 (best coding)

- Use an API gateway like EvoLink so you can switch models with a config change

- Evaluate GPT-5.4 immediately in your own benchmark suite

- Migrate if it wins — switching cost through a unified API is near zero
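With an OpenAI-compatible gateway, switching models mostly comes down to changing the `model` field of a Chat Completions request. This sketch builds (but does not send) such a request from a small routing config. It assumes OpenRouter's OpenAI-compatible endpoint and the `openai/gpt-5.4` slug from its listing; the other model IDs are hypothetical placeholders, and a gateway such as EvoLink uses its own base URL and model names, which should come from its documentation. The environment variable names are also illustrative.

```python
import json
import os

# Routing config. "openai/gpt-5.4" matches the OpenRouter listing URL;
# the other IDs are hypothetical placeholders; check your gateway's model list.
ROUTES = {
    "default": "your-gemini-3.1-pro-id",
    "coding": "your-claude-opus-4.6-id",
    "candidate": "openai/gpt-5.4",
}

# Assumption: OpenRouter's OpenAI-compatible base URL; override per gateway.
BASE_URL = os.environ.get("LLM_BASE_URL", "https://openrouter.ai/api/v1")


def build_request(route: str, prompt: str) -> dict:
    """Return the URL, headers, and JSON body for a Chat Completions call.

    Editing ROUTES (or passing a different route name) is the only change
    needed to point the same code at a different model.
    """
    api_key = os.environ.get("LLM_API_KEY", "")
    return {
        "url": f"{BASE_URL}/chat/completions",
        "headers": {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        "body": json.dumps({
            "model": ROUTES[route],
            "messages": [{"role": "user", "content": prompt}],
        }),
    }
```

The result can be sent with any HTTP client, such as `urllib.request` or `requests`. Keeping model IDs in config rather than code is what makes a GPT-5.4 trial a one-line change; re-running your eval suite after each switch still matters, since prompts tuned for one model do not always transfer.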

Also worth watching: DeepSeek V4 is in early access and could shake up the budget tier.

FAQ

Is GPT-5.4 better than Claude Opus 4.6?

It depends on your tasks, and there is still no broad, independent benchmark consensus on GPT-5.4. Claude Opus 4.6 is listed at 80.8% single-attempt in the DeepMind comparison table, and Anthropic reports up to 81.42% with prompt modification. Treat GPT-5.4 as a strong candidate to test, not an automatic replacement.

Which is cheaper: Claude Opus 4.6 or Gemini 3.1 Pro?

Gemini 3.1 Pro is still cheaper. At base rates, Gemini is $2.00/$12.00 versus Opus at $5.00/$25.00. On requests above 200K input tokens, Gemini's long-context tier rises to $4.00/$18.00 while Opus 4.6 stays on its standard Anthropic rate, which narrows the gap but does not erase it.

What is the context window of Gemini 3.1 Pro?

Gemini 3.1 Pro supports 1M tokens of context natively in production. That ties GPT-5.4 (per its OpenRouter listing) and Claude Opus 4.6 for the largest context window in this comparison; GPT-5.2 tops out at 400K.

Is GPT-5.4 available now?

GPT-5.4 is currently listed on OpenRouter with published token pricing and limits. Availability and billing details can still differ by provider and contract tier.

Can I use Claude Opus 4.6 with 1M context?

Yes. Anthropic's current pricing documentation states that Claude Opus 4.6 includes the full 1M token context window at standard pricing.

Which model is best for coding?

On the single-attempt comparison table, Claude Opus 4.6 is 80.8%, followed by Gemini 3.1 Pro at 80.6% and GPT-5.2 at 80.0%. Anthropic also reports 81.42% for Opus with prompt modification. The differences are small — choose based on your budget and context window needs.

Is Gemini 3.1 Pro good for multimodal tasks?

Yes. Gemini 3.1 Pro is the only model in this comparison with native multimodal input supporting text, image, audio, and video in a single model. Claude and GPT support image input, but neither handles audio or video natively at the API level.

This page is updated as new information becomes available. Last checked: April 9, 2026.

Explore These Models on EvoLink

- Gemini 3.1 Pro API — EvoLink Gemini reasoning route at $2/$12 per 1M tokens

- Gemini 3 Flash Preview API — Fast multimodal at $0.50/$3 per 1M tokens

- Gemini 3.1 Pro CustomTools — Dedicated route for tool-heavy agent workflows

- Gemini API Family — Compare Gemini routes by price, context, and workload fit

- Claude Opus 4.6 API — Anthropic's flagship for complex coding and agent orchestration

- Claude Sonnet 4.6 API — Best balance of speed, intelligence, and cost

- Claude Opus 4.6 vs Gemini 3.1 Pro — Head-to-head pricing and benchmark comparison

- Gemini 3.1 Pro vs GPT-5.2 vs Claude Opus — Three-way comparison with benchmark tables

Want to use GPT-5.4 now with model routing? Create a free EvoLink account (evolink.ai) and switch models through one unified API.