GPT-5.4 Release Date (2026): Latest News, Leaked Features & Developer Guide

If you're tracking GPT-5.4, you're probably trying to answer one question: should you wait for it, or build with what's available now?

This page separates confirmed signals, credible reporting, and speculation so you can make that decision quickly.

Update: GPT-5.4 is now listed on OpenRouter (`openai/gpt-5.4`) with posted pricing ($2.50 / 1M input, $0.625 / 1M cached input, $20.00 / 1M output), a 1M context window, and 128K max output. OpenAI direct billing tiers and enterprise contract pricing can still differ by channel.

Timeline So Far

Here are the most credible signals in chronological order:

- February 27, 2026: Codex PR #13050 added original-resolution image support, with the minimum model version initially set to GPT-5.4. After seven force pushes within five hours, the threshold was changed to GPT-5.3-Codex. The PR was merged on March 3. (Source: GitHub PR #13050)

- March 2, 2026: Codex PR #13212 added a `/fast` slash command, originally described as "toggle Fast mode for GPT-5.4." The reference was scrubbed within three hours. (Source: Awesome Agents)

- March 2, 2026: Separately, OpenAI Codex team member Tibo accidentally posted a screenshot on X showing GPT-5.4 as a selectable model in the Codex app alongside GPT-5.3-Codex. The post was quickly deleted. (Source: NxCode, eWeek)

- March 3, 2026: OpenAI posted "5.4 sooner than you think" on X.

- March 3, 2026: `alpha-gpt-5.4` briefly appeared in a public API models endpoint before being removed.

- March 4, 2026: The Information reported GPT-5.4 may include a context window exceeding 1 million tokens and an "extreme" thinking mode.

- March 4, 2026: PiunikaWeb reported GPT-5.4 activity on LMSYS Arena, suggesting internal testing.

- March 5, 2026: OpenRouter listed `openai/gpt-5.4` with public token pricing and limits.

Confirmed vs Speculative

| Topic | Can reasonably cite | Still uncertain | Why it matters |
|---|---|---|---|
| Availability | OpenRouter now lists openai/gpt-5.4 (March 5, 2026) | OpenAI direct tier parity and contract-tier differences | Rollout and procurement decisions |
| Context window | OpenRouter listing shows 1M context | Cross-provider parity and practical quality at full length | Long-context architecture decisions |
| Reasoning mode | "Extreme" mode is still mostly report-based | Public mode controls, latency tiers, and defaults | Research and analysis workloads |
| Vision detail | Leak hints at full-resolution options | Actual quality and supported formats | Image analysis pipeline planning |
| Agentic improvements | Multiple hints from code references | Scope of tool-calling/agent upgrades | Migration effort for agent flows |
| Pricing | OpenRouter lists $2.50 in / $20 out (+ cached input) | OpenAI direct and enterprise pricing details | Budget forecasting |

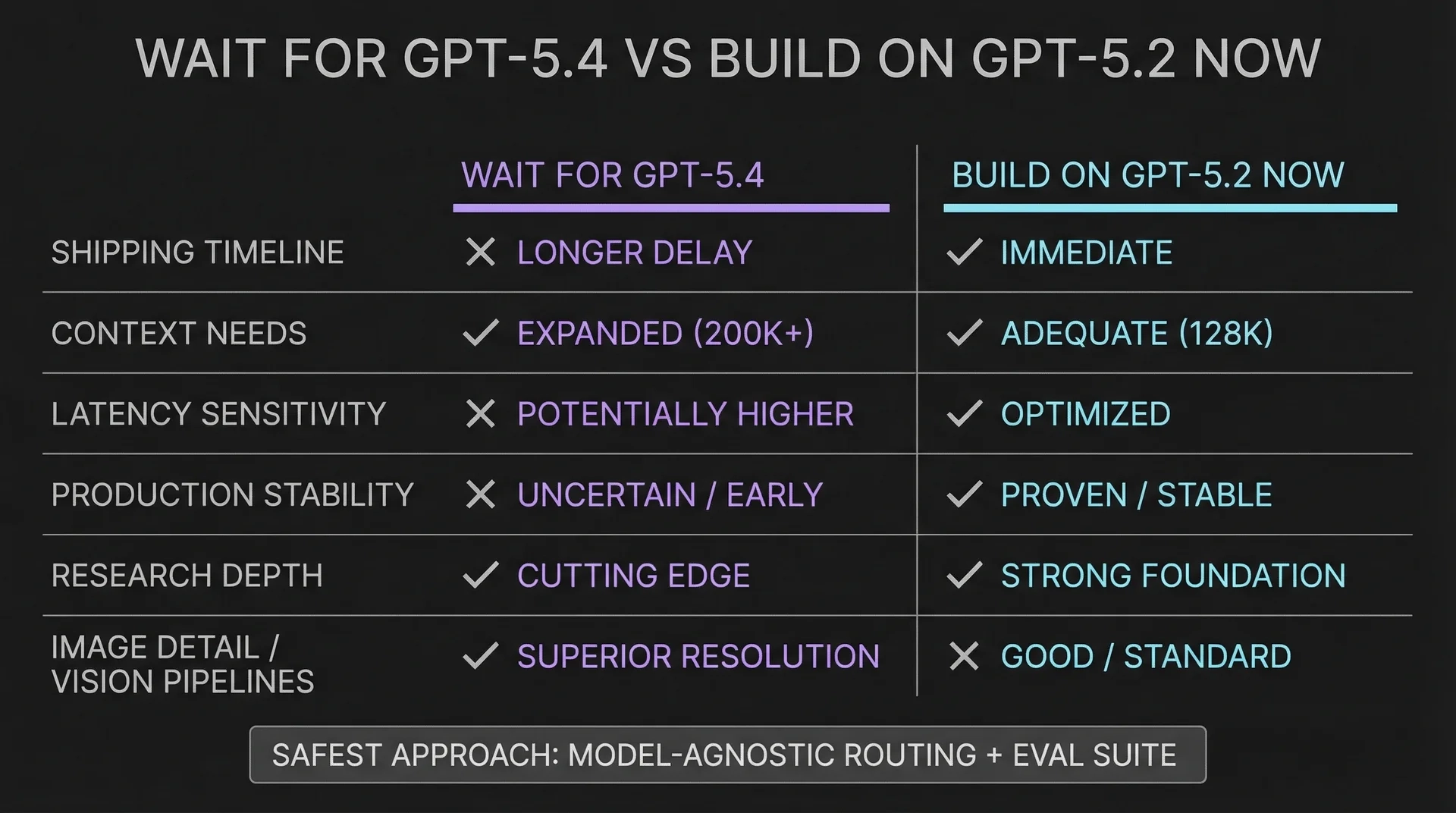

Should You Wait or Build Now?

Build now on GPT-5.2 if:

- Your product ships in the next 2 weeks.

- You do not need more than 400K context.

- You are latency-sensitive.

- You are already in production and only need a model swap later.

Use GPT-5.4 now (in controlled rollout) if:

- Your design depends on 1M context right now.

- Your team can run side-by-side evals on quality, latency, and cost.

- You already have model routing and fallback in place.

- You can accept provider-level variance during early adoption.

Recommended approach: keep GPT-5.2 as the baseline, route a limited share of traffic to GPT-5.4, and promote GPT-5.4 only after your eval gates pass.
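One way to route a limited share of traffic is a deterministic, hash-based split, so the same user or request key always lands on the same model. The sketch below is illustrative only: the `pick_model` helper and the `gpt-5.2` model ID are assumptions, not names from any provider's documentation.

```python
import hashlib

# Assumed model IDs for illustration; use the IDs your provider actually exposes.
BASELINE_MODEL = "gpt-5.2"
CANDIDATE_MODEL = "openai/gpt-5.4"


def pick_model(request_key: str, candidate_percent: int) -> str:
    """Send roughly `candidate_percent` percent of keys to the candidate model.

    The choice is stable: the same key always maps to the same model
    for a given percentage.
    """
    if not 0 <= candidate_percent <= 100:
        raise ValueError("candidate_percent must be between 0 and 100")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to an integer bucket in 0..99.
    bucket = int.from_bytes(digest[:4], "big") % 100
    return CANDIDATE_MODEL if bucket < candidate_percent else BASELINE_MODEL


if __name__ == "__main__":
    keys = [f"user-{i}" for i in range(10_000)]
    hits = sum(pick_model(k, 5) == CANDIDATE_MODEL for k in keys)
    print(f"candidate share at 5%: {hits / len(keys):.3f}")
```

Raising `candidate_percent` in steps keeps earlier keys on the candidate model, which makes before/after comparisons easier to interpret.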

How to Prepare During Early Rollout

1. Set up model-agnostic routing

Keep one internal inference interface and route models behind it. This turns future migration into a config update.
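A minimal sketch of such an interface follows, assuming each provider is wrapped in a callable that takes a model ID and a prompt. The `InferenceRouter`, `Route`, and `echo_backend` names are hypothetical, and the stub backend stands in for a real SDK call.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A backend is any callable that takes (model_id, prompt) and returns text.
Backend = Callable[[str, str], str]


@dataclass(frozen=True)
class Route:
    backend: str   # key into the backends registry
    model_id: str  # explicit model ID passed to that backend


class InferenceRouter:
    """Single entry point for application code; models live in config."""

    def __init__(self, backends: Dict[str, Backend], routes: Dict[str, Route]):
        self._backends = backends
        self._routes = routes

    def complete(self, task: str, prompt: str) -> str:
        route = self._routes[task]
        return self._backends[route.backend](route.model_id, prompt)


def echo_backend(model_id: str, prompt: str) -> str:
    """Stub backend for local testing; a real one would call a provider SDK."""
    return f"[{model_id}] {prompt}"


# Migration is a config change: swap the model_id, leave callers untouched.
routes = {"summarize": Route(backend="stub", model_id="gpt-5.2")}
router = InferenceRouter({"stub": echo_backend}, routes)
```

Application code calls `router.complete("summarize", text)` and never names a model directly, so switching to `openai/gpt-5.4` only touches the `routes` mapping.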

2. Build an eval suite now

Test against your real failure modes:

- Your hardest real task

- One long-context scenario

- One regression set for simple tasks

- One cost check (tokens and dollars per task)
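The pass/fail cases in the list above could be organized as a small harness like the one below. This is a sketch under assumptions: `EvalCase`, `run_suite`, and `stub_model` are hypothetical names, the checks are toy examples, and the cost check is left to a separate token-accounting step.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    passes: Callable[[str], bool]  # returns True if the output is acceptable


def run_suite(model_fn: Callable[[str], str], cases: List[EvalCase]) -> Dict[str, bool]:
    """Run every case through `model_fn` and record pass/fail per case name."""
    if not cases:
        raise ValueError("eval suite needs at least one case")
    return {case.name: bool(case.passes(model_fn(case.prompt))) for case in cases}


def pass_rate(results: Dict[str, bool]) -> float:
    """Fraction of cases that passed."""
    return sum(results.values()) / len(results)


def stub_model(prompt: str) -> str:
    """Placeholder model for a dry run; replace with a real inference call."""
    return prompt.upper()


CASES = [
    EvalCase("simple_regression", "ok", lambda out: out == "OK"),
    EvalCase("hardest_task", "refund policy", lambda out: "REFUND" in out),
    EvalCase("long_context", "needle " + "hay " * 1000,
             lambda out: out.startswith("NEEDLE")),
]
```

Running the same `CASES` against the baseline and the candidate model gives a like-for-like comparison before any traffic moves.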

3. Define success criteria in advance

Pick a few product-level metrics before increasing GPT-5.4 traffic:

- Task completion quality

- P95 latency

- Cost per task

- Hallucination rate for your domain
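Writing the criteria down as data makes the promotion decision mechanical. The sketch below assumes four metrics matching the list above; the `Gate` type, the metric names, and every threshold are placeholders to be replaced with values from your own product.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Gate:
    metric: str
    limit: float
    higher_is_better: bool


def failed_gates(metrics: Dict[str, float], gates: List[Gate]) -> List[str]:
    """Return the names of gates that the measured metrics do not satisfy."""
    failures = []
    for gate in gates:
        value = metrics.get(gate.metric)
        if value is None:
            failures.append(gate.metric)  # a missing measurement blocks promotion
        elif gate.higher_is_better and value < gate.limit:
            failures.append(gate.metric)
        elif not gate.higher_is_better and value > gate.limit:
            failures.append(gate.metric)
    return failures


# Placeholder thresholds, chosen only for illustration.
GATES = [
    Gate("task_quality", 0.90, higher_is_better=True),
    Gate("p95_latency_s", 8.0, higher_is_better=False),
    Gate("cost_per_task_usd", 0.05, higher_is_better=False),
    Gate("hallucination_rate", 0.02, higher_is_better=False),
]
```

An empty list from `failed_gates` means the candidate is eligible for the next traffic step; anything else names exactly which criterion blocked it.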

EvoLink Integration Plan

With GPT-5.4 now publicly listed via OpenRouter, EvoLink integration planning should prioritize baseline checks:

- Availability and stability under load

- Latency baseline (P50/P95)

- Error handling behavior

- Quality gate against current GPT-5.2 evals
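For the latency baseline, P50 and P95 can be computed from raw request timings with a nearest-rank percentile. The sketch below is one simple definition among several; the `percentile` helper and the sample values are illustrative, not measurements.

```python
from typing import List


def percentile(samples: List[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be an integer between 1 and 100")
    ordered = sorted(samples)
    # Integer ceiling of pct/100 * n, which avoids floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


# Made-up latencies in seconds, for illustration only.
latencies = [0.8, 1.2, 0.9, 3.5, 1.0, 1.1, 0.95, 1.3, 1.05, 7.2]

if __name__ == "__main__":
    print("P50:", percentile(latencies, 50))
    print("P95:", percentile(latencies, 95))
```

With only ten samples the P95 is simply the slowest request, which is a reminder to collect enough traffic before trusting tail numbers.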

For reference, GPT-5.2 on EvoLink is currently priced at $1.40/1M input and $11.20/1M output. Final GPT-5.4 pricing on EvoLink should be confirmed on the EvoLink pricing pages at rollout time.

GPT-5 Family Snapshot

| Model | Date | Context | Positioning | EvoLink price |
|---|---|---|---|---|
| GPT-5.3 Instant | March 3, 2026 | 128K (API alias: gpt-5.3-chat-latest) | Everyday tasks | N/A |
| GPT-5.2 Thinking | December 11, 2025 | 400K | Deeper reasoning | $1.40/1M input |
| GPT-5.2-Codex | December 18, 2025 (OpenAI release) / January 14, 2026 (Copilot GA) | 400K | Agentic coding | $1.40/1M input |
| GPT-5.1 | November 2025 | 400K | General-purpose | $1.00/1M input |
| GPT-5.4 | March 2026 (listed on OpenRouter) | 1M (OpenRouter listing) | Flagship upgrade | TBD on EvoLink |

FAQ

When will GPT-5.4 be released?

GPT-5.4 is already listed on OpenRouter as of March 5, 2026. OpenAI direct-channel rollout details can still vary by tier.

Is GPT-5.4 available in the OpenAI API right now?

It is publicly listed on OpenRouter (`openai/gpt-5.4`). Direct OpenAI API availability and pricing details may differ by account tier and contract.

Will GPT-5.4 be more expensive than GPT-5.2?

On the current OpenRouter listing, yes: GPT-5.4 is priced above GPT-5.2. Validate your effective cost with your own prompt mix and cache hit rate.
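As a rough worked example, the listed OpenRouter prices ($2.50 input, $0.625 cached input, $20.00 output per 1M tokens) can be turned into a per-request estimate. The sketch assumes a simplified model in which the cache hit rate is the fraction of input tokens billed at the cached rate; real billing may differ by provider.

```python
# Listed OpenRouter prices for openai/gpt-5.4, in USD per 1M tokens.
INPUT_PER_M = 2.50
CACHED_INPUT_PER_M = 0.625
OUTPUT_PER_M = 20.00


def cost_usd(input_tokens: int, output_tokens: int, cache_hit_rate: float = 0.0) -> float:
    """Estimate the cost of one request under a simplified caching model."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    cached = input_tokens * cache_hit_rate   # tokens billed at the cached rate
    fresh = input_tokens - cached            # tokens billed at the full input rate
    total = fresh * INPUT_PER_M + cached * CACHED_INPUT_PER_M + output_tokens * OUTPUT_PER_M
    return total / 1_000_000


if __name__ == "__main__":
    print(f"no cache:  ${cost_usd(100_000, 2_000):.5f}")
    print(f"50% cache: ${cost_usd(100_000, 2_000, 0.5):.5f}")
```

For a request with 100,000 input tokens and 2,000 output tokens, this gives about $0.29 with no caching and about $0.196 when half the input is served from cache.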

Will GPT-5.4 replace GPT-5.2 immediately?

Probably not. Major releases usually overlap with prior models for a transition period.

Will existing OpenAI-compatible code keep working?

Most likely yes. In most integrations, migration should primarily be a model-name change plus evaluation checks.

What is the safest GPT-5.2 to GPT-5.4 migration path for production?

Use model-agnostic routing, keep feature flags per model, run domain evals, then do a staged rollout by traffic percentage.

Will gpt-5.3-chat-latest automatically become GPT-5.4?

Do not assume that. Treat aliases as separate products and pin explicit model IDs in production.
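A small config check can catch floating aliases before they reach production. The sketch below assumes that a `-latest` suffix marks an alias, as in `gpt-5.3-chat-latest`; that convention, and the `unpinned_models` helper, are assumptions rather than a documented rule.

```python
from typing import Dict, List


def is_pinned(model_id: str) -> bool:
    """Treat IDs ending in '-latest' as floating aliases (assumed convention)."""
    return not model_id.endswith("-latest")


def unpinned_models(config: Dict[str, str]) -> List[str]:
    """Return the config keys whose model ID looks like a floating alias."""
    return sorted(name for name, model_id in config.items() if not is_pinned(model_id))


# Hypothetical production config mapping a use case to a model ID.
PROD_MODELS = {
    "chat": "gpt-5.3-chat-latest",
    "batch": "openai/gpt-5.4",
}

if __name__ == "__main__":
    print("unpinned:", unpinned_models(PROD_MODELS))
```

Running this in CI would flag the `chat` entry here, prompting a deliberate choice of model ID instead of an implicit upgrade.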

Does GPT-5.4 help long-context RAG quality or only increase token limit?

Higher context can help only if retrieval quality, chunking strategy, and evaluation coverage are already strong.

Should startups ship with GPT-5.2 now or wait for GPT-5.4 in March 2026?

If launch is near-term, ship on GPT-5.2 and prepare a fast model-switch path. Waiting is mainly justified for hard 1M+ context requirements.

How will GPT-5.4 compare with Gemini 3.1 Pro and Claude Opus 4.6?

A fair comparison requires production access and side-by-side tests on identical tasks.