Best OpenRouter Alternatives in 2026: Verified Routing Options for Production Teams

If you are looking for an OpenRouter alternative, you are usually not asking for "another API endpoint."

You are asking for one of these:

- more control over routing logic

- stronger privacy or deployment control

- better observability in production

- clearer pricing for routing itself

- a better fit for your workload than a broad hosted catalog

TL;DR

- Use OpenRouter if you want the broadest hosted catalog and a simple openrouter/auto experience.

- Use evolink Smart router if you want a unified gateway across chat, image, and video with gateway-side routing.

- Use Portkey if you want routing plus production controls such as retries, configs, logs, and enterprise privacy options.

- Use LiteLLM if self-hosting and infrastructure ownership matter more than managed convenience.

- Use Not Diamond if you want a routing optimization layer rather than another gateway.

- Use Helicone if observability is the priority and routing is secondary.

- Use Azure AI Foundry model router if your stack already lives inside Azure.

What changed from the original draft

The earlier draft mixed verified platform facts with unsupported operational claims and overly broad conclusions. This version does three things differently:

- It uses official docs and pricing pages for the main comparison.

- It removes community-report-style failure anecdotes from the core recommendation.

- It uses the correct EvoLink product naming: evolink Smart router, not "EvoLink Auto."

Verified comparison table

| Platform | Routing approach | Deployment / privacy posture | Pricing visibility | Best fit |
|---|---|---|---|---|
| OpenRouter | Hosted openrouter/auto, powered by Not Diamond; provider routing and fallbacks are configurable | Hosted gateway; official docs support ZDR controls and provider-level data-policy filtering | Clear on model pages; official docs say Auto Router has no extra fee | Teams that want broad model access with minimal setup |
| evolink Smart router | Smart routing inside the EvoLink unified API workflow | Hosted unified gateway for chat, image, and video; OpenAI-compatible integration pattern is documented in this repo | Official site says pay-as-you-go with a small routing fee and claims 20-70% savings depending on route | Teams that want one API surface across modalities and lower integration overhead |
| Portkey | Config-driven routing, retries, fallbacks, load balancing, caching | Hosted control plane with privacy mode and enterprise private-cloud options; also open-source gateway | Public plans start with Free and $49/month Production | Teams that need routing plus observability and operational controls |
| LiteLLM | Self-managed router with load balancing, cooldowns, retries, and fallbacks | Self-hosted or self-operated proxy; strongest when infra control matters | OSS core, but infra cost is yours; enterprise pricing is separate | Teams that want maximum control and accept DevOps overhead |
| Not Diamond | Routing recommendation and optimization layer, not a gateway | Works with your existing stack; official site lists SOC-2, ISO 27001, ZDR, and VPC options | Public pay-as-you-go routing recommendations plus custom enterprise plans | Teams optimizing model choice across their own stack |
| Helicone | Observability-first gateway with caching and automatic fallbacks | Hosted, with higher plans listing HIPAA, SOC-2 Type II, and on-prem options | Public plan structure with free tier and usage-based pricing | Teams that care most about monitoring, debugging, and usage analytics |
| AIRouter | Dynamic routing with quality, cost, and speed weighting | Offers model-selection and private-selection modes to keep content out of the router path | Public pricing from free to paid monthly plans | Teams that want router-first optimization with privacy-preserving modes |
| Azure AI Foundry model router | Azure-deployed model-router with routing modes and custom subsets | Runs inside your Foundry resource; strongest fit for Azure governance and tenant alignment | Azure billing depends on your deployment and selected models; verify current regional pricing separately | Azure-native teams that want routing without another external gateway |

Where each alternative is strongest

OpenRouter

openrouter/auto is powered by Not Diamond and billed at the selected model's normal rate with no extra auto-router fee.

Use it when:

- you want the largest hosted catalog

- you do not want to self-host a routing layer

- you want provider routing, fallbacks, and ZDR controls in one hosted product

If you want tighter deployment control than a hosted router can give you, look elsewhere.

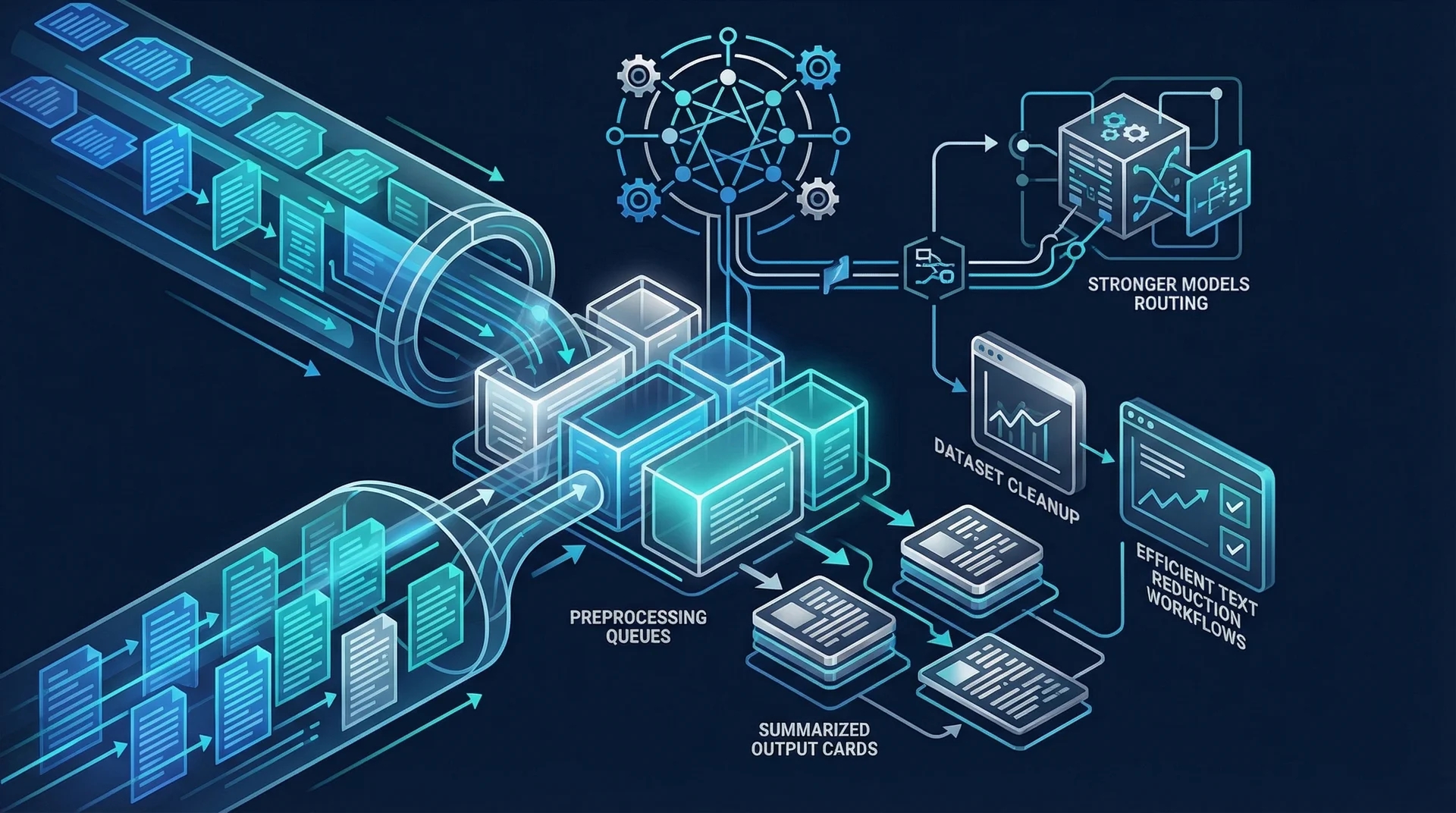

evolink Smart router

evolink Smart router is the correct EvoLink routing product name in this repo's current blog and integration materials. The fit is different from OpenRouter:

- EvoLink publicly positions itself as one API across chat, image, and video

- the official site says routing can reduce cost depending on available provider paths

- the repo's own quickstart content confirms an OpenAI-compatible request shape and base URL workflow

This is the right option when your goal is not just "route among text models," but to keep a single API surface as your product expands across modalities.
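As a rough illustration of what an "OpenAI-compatible request shape and base URL workflow" usually means in practice, the sketch below builds (but does not send) a chat completions request. The base URL `https://gateway.example/v1`, the model name `example-model`, and the helper `build_chat_request` are placeholders invented for this example, not EvoLink's real values; take the actual base URL and model identifiers from the quickstart.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        # OpenAI-compatible gateways conventionally expose this path.
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values: swap in the gateway's documented base URL and model.
request = build_chat_request(
    base_url="https://gateway.example/v1",
    api_key="sk-test",
    model="example-model",
    user_message="Hello",
)
print(request.full_url)
```

If the gateway really is OpenAI-compatible, moving between providers should mostly mean changing `base_url`, the key, and the model name while the payload shape stays the same.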

Portkey

Portkey is strongest when routing is only one part of the problem. Its official docs and pricing pages make the positioning clear:

- routing configs

- retries

- fallbacks

- load balancing

- logs and traces

- privacy modes and enterprise hosting options

If your team needs operational tooling around AI traffic, not just model selection, Portkey is usually a better comparison target than pure router products.

LiteLLM

LiteLLM is the clearest self-hosted option in this comparison. Its official routing docs cover:

- load balancing across deployments

- cooldown logic

- fallbacks

- retries with exponential backoff

That makes it attractive for internal platforms, regulated environments, or teams that already operate Redis, gateways, and deployment automation. The tradeoff is obvious: you also own the operational complexity.
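To make the ideas in that list concrete, here is a minimal, generic sketch of retries with exponential backoff, a per-deployment cooldown, and ordered fallbacks. It is not LiteLLM's API or implementation; `FallbackRouter`, `AllDeploymentsFailed`, and every parameter name are hypothetical, and the deployments are plain Python callables standing in for real provider calls.

```python
import random
import time
from typing import Callable, Dict, List, Optional, Tuple

Sender = Callable[[str], str]


class AllDeploymentsFailed(Exception):
    """Raised when every deployment failed or is cooling down."""


class FallbackRouter:
    def __init__(
        self,
        deployments: List[Tuple[str, Sender]],  # ordered: primary first
        max_retries: int = 2,
        base_delay: float = 0.5,
        cooldown_seconds: float = 30.0,
        sleep: Callable[[float], None] = time.sleep,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.deployments = deployments
        self.max_retries = max_retries
        self.base_delay = base_delay
        self.cooldown_seconds = cooldown_seconds
        self.sleep = sleep
        self.clock = clock
        self._cooldown_until: Dict[str, float] = {}

    def call(self, prompt: str) -> str:
        last_error: Optional[Exception] = None
        for name, send in self.deployments:
            # Skip deployments that failed recently and are still cooling down.
            if self.clock() < self._cooldown_until.get(name, float("-inf")):
                continue
            for attempt in range(self.max_retries + 1):
                try:
                    return send(prompt)
                except Exception as exc:  # a real router would filter error types
                    last_error = exc
                    if attempt < self.max_retries:
                        # Exponential backoff: base, 2x base, 4x base, plus jitter.
                        delay = self.base_delay * (2 ** attempt)
                        self.sleep(delay + random.uniform(0, delay / 10))
            # Retries exhausted: cool this deployment down, try the next one.
            self._cooldown_until[name] = self.clock() + self.cooldown_seconds
        raise AllDeploymentsFailed("no deployment succeeded") from last_error
```

Even this toy version has state (cooldown timestamps) that would need to be shared across processes in a real deployment, which is one reason self-hosted routers tend to bring extra infrastructure with them.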

Not Diamond

Not Diamond should not be treated as a direct "gateway replacement" in the same way as OpenRouter or Portkey. Its own pricing page describes it as a routing and optimization layer that can sit on top of your existing stack.

That distinction matters:

- if you want a hosted API gateway, Not Diamond is not the closest replacement

- if you want a smarter model-selection layer on top of your current gateway or provider setup, it is one of the most direct options

Helicone

Helicone is an observability-first gateway. Alongside monitoring, it offers:

- caching

- automatic fallbacks

- request storage and retention controls

- compliance features on higher tiers

Choose it when debugging, analytics, and usage visibility are your main bottlenecks.

AIRouter

AIRouter is the most explicitly router-first alternative in this list outside Not Diamond. Its official site emphasizes:

- routing by quality, cost, and speed preferences

- private selection mode using anonymized patterns

- a separate model-selection mode where you keep the model call on your side

That makes it especially relevant for teams that want routing help without fully giving up control of their data path.

Azure AI Foundry model router

model-router is the most ecosystem-specific option here. The official Azure docs show that you deploy it inside Foundry, pick a routing mode, optionally route to a custom subset of models, and then call it through the chat completions API like a normal deployed model.

This is the best fit when:

- your policies already live in Azure

- your AI stack already runs in Foundry

- you want routing without adding another vendor into the critical path

It is a weaker fit if you want cross-cloud or cross-vendor independence.

Scenario guide

| If your main goal is... | Start with | Why |
|---|---|---|
| Broad hosted model access | OpenRouter | Biggest hosted catalog and low-friction setup |
| Unified API across chat, image, and video | evolink Smart router | Better fit when your routing needs span multiple modalities |
| Enterprise controls, logs, and routing policies | Portkey | Operational surface is stronger than router-only products |
| Self-hosted routing and infra ownership | LiteLLM | Most direct self-managed alternative |
| Smarter model recommendation on top of your own stack | Not Diamond | Optimization layer rather than gateway replacement |
| Observability and debugging | Helicone | Monitoring-first with gateway helpers |
| Privacy-preserving routing assistance | AIRouter | Selection and private-selection modes are core to the product |
| Azure-native routing | Azure AI Foundry model router | Best alignment with Azure governance and deployment patterns |

What to verify before you switch

Do not choose a router based on the homepage headline alone. Verify these four things with your own traffic:

1. Data handling

Check whether the platform:

- stores prompts by default

- supports ZDR or privacy-mode controls

- can run in your environment or private cloud

2. Routing control

Check whether you can:

- restrict the model pool

- set fallbacks

- prioritize latency vs cost vs quality

- inspect which underlying model actually handled the request
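One way to reason about these controls before evaluating a vendor is to write down the policy you expect the router to honor. The sketch below is a toy model of that policy, assuming normalized 0-to-1 scores that you would have to measure yourself; `Candidate`, `rank_models`, the model names, and the numbers are all made up for illustration and do not reflect any platform's actual routing algorithm.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float  # 0..1, higher is better
    cost: float     # 0..1 normalized, lower is better
    latency: float  # 0..1 normalized, lower is better


def rank_models(candidates: Iterable[Candidate], allowed: Set[str],
                weights: Dict[str, float]) -> List[str]:
    """Return allowed model names, best first; the tail is the fallback order."""
    # Restrict the pool first, so a disallowed model can never be selected.
    pool = [c for c in candidates if c.name in allowed]
    if not pool:
        raise ValueError("no allowed models in the candidate pool")

    def score(c: Candidate) -> float:
        return (weights["quality"] * c.quality
                - weights["cost"] * c.cost
                - weights["latency"] * c.latency)

    return [c.name for c in sorted(pool, key=score, reverse=True)]


# Hypothetical candidates with invented scores.
candidates = [
    Candidate("big-model", quality=0.9, cost=0.8, latency=0.6),
    Candidate("small-model", quality=0.6, cost=0.1, latency=0.2),
    Candidate("unapproved-model", quality=1.0, cost=0.0, latency=0.0),
]
allowed = {"big-model", "small-model"}

quality_first = rank_models(candidates, allowed,
                            {"quality": 1.0, "cost": 0.1, "latency": 0.1})
cost_first = rank_models(candidates, allowed,
                         {"quality": 0.2, "cost": 1.0, "latency": 0.2})
print(quality_first[0], cost_first[0])
```

Whatever the platform does internally, you should be able to express the equivalent of `allowed` and `weights`, and to confirm from the response or logs which model was actually used.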

3. Operational fit

Check whether you need:

- logs and traces

- rate-limit handling

- retries and backoff

- self-hosting

- enterprise compliance paperwork

4. Real pricing

There is no such thing as "cheap routing" in the abstract. Compare:

- routing fees

- request or seat fees

- log retention costs

- inference passthrough costs

- your own infra bill if you self-host
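The comparison is simple arithmetic once the line items are written down. The sketch below adds them up for two hypothetical setups. Every figure is invented for illustration, and modeling the routing fee as a percentage of inference spend is an assumption; some platforms charge per request or not at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCosts:
    inference_passthrough: float  # model spend billed through the platform
    routing_fee_rate: float       # assumed: fraction of inference spend
    platform_fee: float           # request, plan, or seat fees
    log_retention: float          # extra cost for keeping logs longer
    self_hosted_infra: float      # your own servers, Redis, on-call time


def monthly_total(costs: MonthlyCosts) -> float:
    """Sum every line item, applying the routing fee on top of inference."""
    routed_inference = costs.inference_passthrough * (1 + costs.routing_fee_rate)
    return (routed_inference + costs.platform_fee
            + costs.log_retention + costs.self_hosted_infra)


# Invented numbers: same inference spend, different surrounding costs.
hosted = MonthlyCosts(inference_passthrough=1000.0, routing_fee_rate=0.05,
                      platform_fee=50.0, log_retention=20.0,
                      self_hosted_infra=0.0)
self_hosted = MonthlyCosts(inference_passthrough=1000.0, routing_fee_rate=0.0,
                           platform_fee=0.0, log_retention=0.0,
                           self_hosted_infra=300.0)

print(f"hosted: {monthly_total(hosted):.2f}")
print(f"self-hosted: {monthly_total(self_hosted):.2f}")
```

With these made-up inputs the "free" self-hosted option ends up costing more per month, which is exactly the kind of result that only shows up when every line item is included.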

Platforms intentionally left out of the main table

Final take

OpenRouter is still a strong default if you want a broad hosted catalog and a quick path to auto-routing.

But "best alternative" depends on what you are actually replacing:

- replacing broad hosted access: choose another hosted gateway

- replacing missing controls: choose Portkey or LiteLLM

- replacing weak deployment fit: choose Azure AI Foundry or LiteLLM

- replacing one-model-per-integration sprawl across modalities: choose evolink Smart router

That is the more useful frame for production teams than declaring a universal winner.

FAQ

Is OpenRouter still a good default in 2026?

Yes. It is still one of the simplest hosted ways to access a large model catalog through one API. If your team values breadth and ease of setup over deployment control, it remains a sensible default.

Which OpenRouter alternative is best for self-hosting?

LiteLLM is the clearest self-hosted option in this comparison. Its official routing docs explicitly cover load balancing, fallbacks, retries, and cooldown logic across deployments.

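Is evolink Smart router the same thing as OpenRouter Auto?

No. openrouter/auto is OpenRouter's hosted auto router, powered by Not Diamond. evolink Smart router is the routing feature inside EvoLink's unified API, which spans chat, image, and video.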

Is Not Diamond a gateway?

Not in the same sense as OpenRouter, Portkey, or LiteLLM. Based on its own pricing and product pages, Not Diamond is better understood as a routing and optimization layer that works with the rest of your stack.

Which options have public pricing I can inspect before talking to sales?

OpenRouter, Portkey, Helicone, AIRouter, and Not Diamond all publish meaningful pricing information or plan structures publicly. Azure AI Foundry pricing still needs to be checked against your region, models, and current Azure billing setup.

Which option is strongest for enterprise controls?

Portkey and Azure AI Foundry are the strongest enterprise-control options in this list, but they solve different problems. Portkey is better when you want a specialized AI gateway layer. Azure is better when you already standardize on Azure governance and deployment.

When should I choose evolink Smart router over a fixed model?

Choose evolink Smart router when your workload is still evolving, when you want one gateway surface across multiple AI modalities, or when you want routing decisions to stay in the gateway layer. Choose a fixed model when you already know the exact quality, latency, and cost profile you want for a stable production path.