omni-moderation-latest Explained: Text and Image Moderation Guide

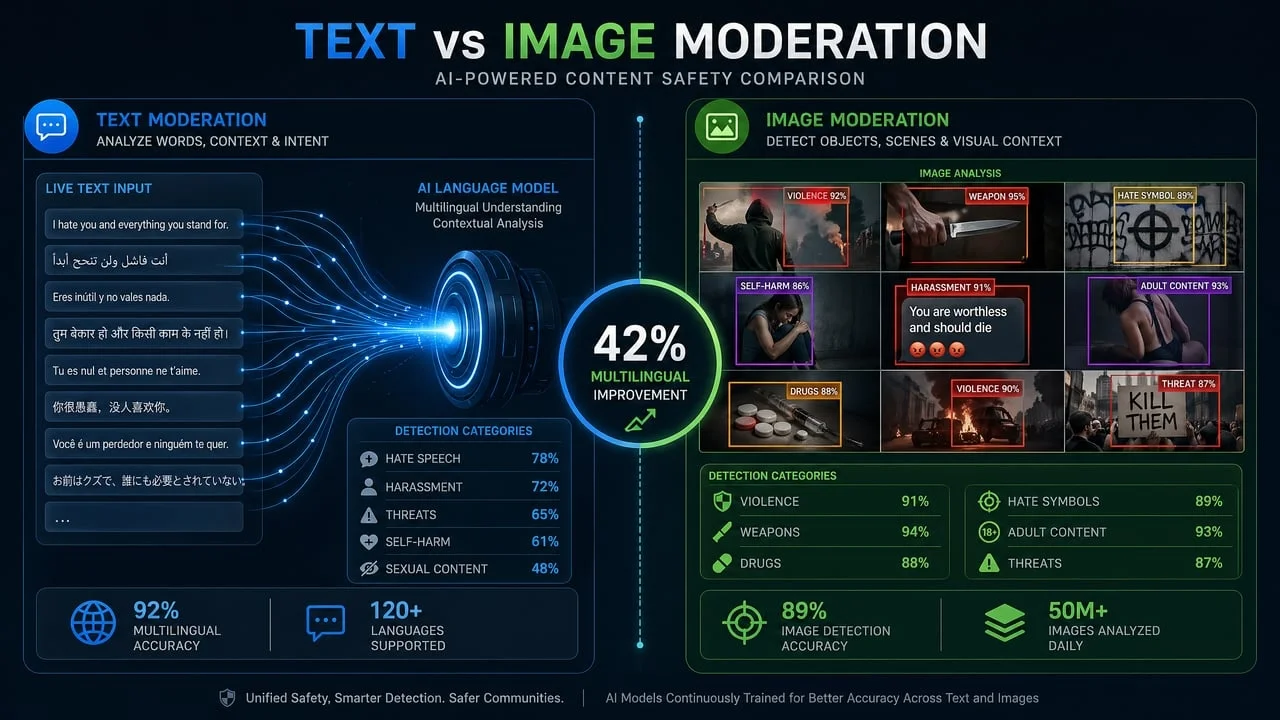

omni-moderation-latest is OpenAI's multimodal moderation model for detecting harmful content in text and images. It matters because it moved OpenAI moderation beyond text-only checks and gave developers a single model family for text and image safety workflows.

The short version:

- OpenAI introduced omni-moderation-latest on September 26, 2024.

- It is based on GPT-4o and supports both text and image inputs.

- OpenAI says the model is free to use through the Moderation API.

- Image support is category-specific, so not every moderation category works for image-only inputs.

- Teams that want an OpenAI-compatible moderation endpoint inside EvoLink workflows can also evaluate EvoLink Moderation 1.0.

This guide explains what the model does, how it differs from older text moderation models, and how to think about production implementation.

What is omni-moderation-latest?

omni-moderation-latest is OpenAI's moderation model for identifying potentially harmful content. OpenAI's model page describes it as a free moderation model that accepts text and image inputs and returns text output through the Moderation endpoint.

The model is not a general-purpose image generator or chat model. It is a classifier. You send user content to the Moderation API, and the response tells you which categories may be present and how strongly the model scored them.
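To make the classifier framing concrete, here is a minimal sketch of reading one moderation result. It assumes the result has been converted to a plain dict with flagged, categories, and category_scores keys, mirroring the fields used in the API examples in this guide. The sample scores and the two helper functions are illustrative, not real API output or part of the OpenAI SDK.

```python
from typing import Dict, List, Tuple

# Hypothetical sample shaped like one entry of `response.results`.
# The category names are real; the scores are made up for illustration.
sample_result = {
    "flagged": True,
    "categories": {"violence": True, "harassment": False, "sexual": False},
    "category_scores": {"violence": 0.91, "harassment": 0.12, "sexual": 0.01},
}


def flagged_categories(result: Dict) -> List[str]:
    """Return the names of categories the model marked as present."""
    return sorted(name for name, hit in result["categories"].items() if hit)


def top_categories(result: Dict, limit: int = 3) -> List[Tuple[str, float]]:
    """Return the highest-scoring categories, strongest first."""
    scores = result["category_scores"]
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:limit]


print(flagged_categories(sample_result))
print(top_categories(sample_result, limit=2))
```

The boolean flags say which categories the model considers present, while the scores let your application apply its own cutoffs.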

Why OpenAI replaced text-only moderation with multimodal moderation

Before omni-moderation-latest, many moderation systems treated text and images as separate problems. That created awkward production workflows:

- one moderation call for a user comment

- another service for image uploads

- separate category definitions

- separate response formats

- separate thresholds and review rules

OpenAI's September 2024 announcement positioned the new model as a way to evaluate harmful text and images with a more capable multimodal classifier. OpenAI also said the model improved performance, especially for non-English content.

The practical result is simple: applications that accept both captions and images can use one moderation model instead of stitching together a text classifier and a separate image safety service.

What inputs does omni-moderation-latest support?

OpenAI's model page lists:

| Modality | Support |
|---|---|
| Text | Input and output |
| Image | Input only |
| Audio | Not supported |
| Video | Not supported |

omni-moderation-latest can evaluate text, images, or text-plus-image requests, but it does not moderate audio or video directly.

For teams building user-generated content workflows, this maps well to common cases:

- comments and chat messages

- profile text

- image uploads

- listings with captions and photos

- AI-generated text or generated images before publication

Which categories work for images?

This is the detail many teams miss.

OpenAI's announcement says multimodal harm classification was supported for these image-related categories at launch:

- violence and violence/graphic

- self-harm, self-harm/intent, and self-harm/instructions

- sexual content, but not sexual/minors

OpenAI also states that the remaining categories were text-only at the time of the announcement, with plans to expand multimodal support.

In practice, that means image moderation is useful, but it is not the same as saying every text moderation category works equally well for images. If your product needs to detect hate symbols in memes, policy-violating text embedded inside images, brand safety issues, spam overlays, or marketplace-specific visual rules, you may still need additional checks.
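One way to keep this limitation visible in code is to record which categories had image support at launch and check your policy against that list before relying on an image-only verdict. The sketch below hard-codes the launch list described above. The IMAGE_CAPABLE_AT_LAUNCH constant, the uncovered_for_images helper, and the sample policy are hypothetical, and the list should be rechecked against current OpenAI docs because OpenAI said it planned to expand multimodal support.

```python
# Categories with image support at launch, per the list above.
# Assumption: this may be out of date; verify against current docs.
IMAGE_CAPABLE_AT_LAUNCH = frozenset({
    "violence",
    "violence/graphic",
    "self-harm",
    "self-harm/intent",
    "self-harm/instructions",
    "sexual",
})


def uncovered_for_images(policy_categories):
    """Return policy categories that an image-only request would not cover."""
    return sorted(set(policy_categories) - IMAGE_CAPABLE_AT_LAUNCH)


# Hypothetical product policy that cares about four categories.
policy = ["violence", "sexual", "sexual/minors", "illicit"]
print(uncovered_for_images(policy))
```

Any category this returns needs a different signal for images, such as text extracted from the image, a separate classifier, or human review.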

omni-moderation-latest vs text-moderation-latest

| Area | text-moderation-latest | omni-moderation-latest |
|---|---|---|
| Primary input | Text | Text and images |
| Image moderation | Not the main use case | Supported for selected categories |
| Newer harm categories | More limited | Adds illicit and illicit/violent as text-only categories, according to OpenAI's announcement |
| Multilingual performance | Older baseline | OpenAI reported stronger multilingual performance in its internal evaluation |
| Best fit | Legacy text-only integrations | Newer text and image moderation workflows |

The main advantage of omni-moderation-latest is broader input support and newer category behavior.

How to use omni-moderation-latest

A basic text moderation call looks like this:

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

response = client.moderations.create(
    model="omni-moderation-latest",
    input="User-submitted text goes here",
)

result = response.results[0]

# Print details only when the model flagged the content.
if result.flagged:
    print(result.categories)
    print(result.category_scores)
```

For image moderation, use an image input:

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

response = client.moderations.create(
    model="omni-moderation-latest",
    input=[
        {
            "type": "image_url",
            "image_url": {
                "url": "https://example.com/user-upload.jpg"
            },
        }
    ],
)

result = response.results[0]

# Overall verdict, then the per-category scores behind it.
print(result.flagged)
print(result.category_scores)
```

For text-plus-image moderation:

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

# Send the caption and the image together in one request.
response = client.moderations.create(
    model="omni-moderation-latest",
    input=[
        {"type": "text", "text": "Caption or user message"},
        {
            "type": "image_url",
            "image_url": {
                "url": "https://example.com/user-upload.jpg"
            },
        },
    ],
)
```

Always test these examples against the current OpenAI API docs before shipping, because SDK request shapes can evolve over time.
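Since the three request shapes above differ only in the input value, a small helper can build it from whatever a submission contains. This is a sketch: build_moderation_input is a hypothetical helper, not part of the OpenAI SDK, and it only assembles the list without calling the API.

```python
from typing import Dict, List, Optional


def build_moderation_input(
    text: Optional[str] = None,
    image_url: Optional[str] = None,
) -> List[Dict]:
    """Assemble the `input` list for a text, image, or text-plus-image check."""
    parts: List[Dict] = []
    if text:
        parts.append({"type": "text", "text": text})
    if image_url:
        parts.append({"type": "image_url", "image_url": {"url": image_url}})
    if not parts:
        # Nothing to moderate; fail loudly rather than send an empty request.
        raise ValueError("Provide text, an image URL, or both")
    return parts


listing = build_moderation_input(
    text="Caption or user message",
    image_url="https://example.com/user-upload.jpg",
)
print(listing)
```

The returned list can be passed as the input argument to client.moderations.create, so a listing with a caption and a photo goes through the same code path as a plain comment.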

Production patterns for moderation workflows

The API call is only one part of the moderation system. In production, the bigger question is what your application does with the result. That typically means mapping scores into allow, review, or block decisions, tracking false positives, and logging reviewer overrides.
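A minimal sketch of such a mapping follows, assuming category scores arrive as a dict of floats between 0 and 1. The threshold values, the HARD_BLOCK set, and the decide function are hypothetical placeholders to tune against your own review data, not recommended settings.

```python
from typing import Dict

# Hypothetical policy values; tune them against your own false-positive data.
HARD_BLOCK = {"sexual/minors", "self-harm/instructions"}
BLOCK_THRESHOLD = 0.85
REVIEW_THRESHOLD = 0.40


def decide(category_scores: Dict[str, float]) -> str:
    """Map category scores to 'allow', 'review', or 'block'."""
    decision = "allow"
    for category, score in category_scores.items():
        # Hard-block categories are blocked at the lower review threshold.
        if category in HARD_BLOCK and score >= REVIEW_THRESHOLD:
            return "block"
        if score >= BLOCK_THRESHOLD:
            return "block"
        if score >= REVIEW_THRESHOLD:
            decision = "review"
    return decision


print(decide({"violence": 0.05, "harassment": 0.10}))  # allow
print(decide({"violence": 0.55, "harassment": 0.10}))  # review
print(decide({"violence": 0.92, "harassment": 0.10}))  # block
```

Keeping the policy in a few named constants makes it easier to log which rule fired and to adjust thresholds when reviewers override a decision.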

With omni-moderation-latest, you build that mapping yourself from category flags and scores. Your application decides which categories are hard blocks, which require review, and which are signals only.

When omni-moderation-latest is a good fit

Consider omni-moderation-latest when:

- you already use OpenAI directly

- your app needs OpenAI's documented moderation categories

- your workflow is text-first with some image moderation needs

- you are comfortable implementing your own threshold and review logic

- you want a free moderation model inside the OpenAI API ecosystem

For many OpenAI-native products, that is a strong starting point.

When to consider an OpenAI-compatible alternative

EvoLink Moderation 1.0 is aimed at the part that omni-moderation-latest leaves to your application code. It returns an evolink_summary object with risk_level (low / medium / high), so your application can route content without writing category-score aggregation. It supports text-only, image-only, and text-plus-image inputs.

OpenAI vs EvoLink: how to choose

| Choose this | If your priority is... |
|---|---|
| OpenAI omni-moderation-latest | Free moderation inside a direct OpenAI API workflow |
| EvoLink Moderation 1.0 | OpenAI-compatible moderation inside EvoLink with text-plus-image support and a simplified risk summary |
| Multi-layer moderation | Custom policy enforcement, brand rules, appeals, human review, or compliance workflows beyond one API |

There is no universal winner. OpenAI's model is a strong fit for OpenAI-native applications. EvoLink is a strong fit when your team wants the moderation layer to sit beside other EvoLink API calls and return a production-oriented risk summary.

FAQ

Is omni-moderation-latest free?

OpenAI describes moderation models as free models, and OpenAI's announcement says the new moderation model is free to use through the Moderation API. Rate limits depend on usage tier.

Does omni-moderation-latest support images?

Yes. OpenAI's model page lists image as an input modality. However, OpenAI's announcement makes clear that image support is category-specific, so not every moderation category applies to image inputs.

Does omni-moderation-latest support video or audio?

No. OpenAI's model page lists audio and video as not supported for this model.

Is EvoLink Moderation the same as omni-moderation-latest?

No. EvoLink Moderation 1.0 is a separate EvoLink moderation service with an OpenAI-compatible API interface. It is designed for teams that want text and image moderation inside EvoLink workflows.

Should I replace OpenAI moderation with EvoLink Moderation?

It depends on what you need. EvoLink Moderation is worth evaluating if you want evolink_summary and risk_level, flat per-call pricing, and integration with other EvoLink APIs.

Related moderation guides

- OpenAI Moderation API Pricing: Is It Free? Limits and Alternatives

- Image Moderation API Guide: How to Filter Unsafe User-Uploaded Images

- Best Content Moderation APIs and Tools for Developers

- How to Add Content Moderation to Your Chatbot or AI Agent