Chatbot Content Moderation: Input, Output, and Agent Guardrails

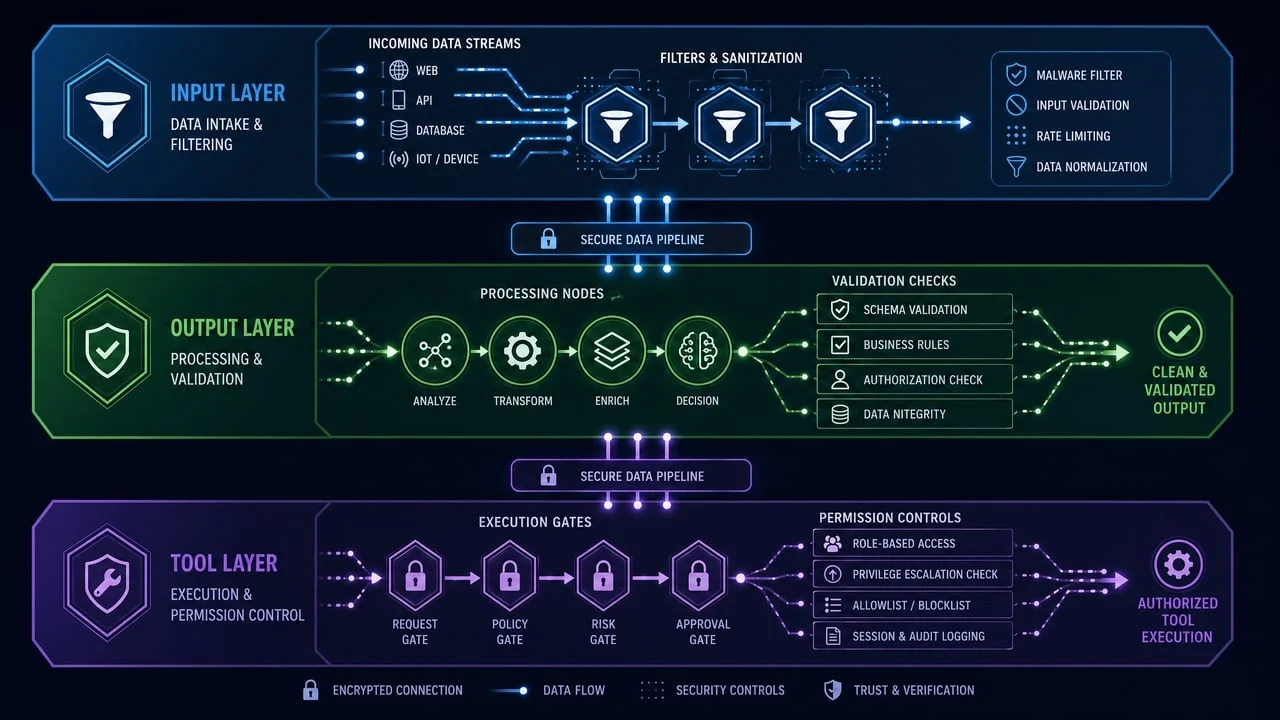

Adding content moderation to a chatbot or AI agent is not just about blocking bad words. A production workflow needs to check user inputs, model outputs, and the actions an agent is allowed to take.

The safest mental model is:

input moderation -> model or agent reasoning -> tool-call validation -> output moderation -> allow / review / block
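If each stage in this flow reports its own allow / review / block outcome, the pipeline needs a rule for combining them. A minimal sketch, assuming the most severe outcome from any stage wins; `Decision` and `combine` are hypothetical names, not part of any SDK.

```python
from enum import IntEnum


class Decision(IntEnum):
    """Outcome of a single guardrail stage, ordered by severity."""
    ALLOW = 0
    REVIEW = 1
    BLOCK = 2


def combine(*decisions: Decision) -> Decision:
    """Return the most severe decision; with no stages, default to ALLOW."""
    return max(decisions, default=Decision.ALLOW)


# Hypothetical results from input moderation, tool-call validation, and output moderation.
stages = [Decision.ALLOW, Decision.REVIEW, Decision.ALLOW]
print(combine(*stages).name)  # REVIEW
```

Ordering the enum by severity lets plain `max` do the combining, so adding a new stage does not change the rule.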

TL;DR

- Moderate user inputs before they reach your chatbot or agent.

- Moderate model outputs before users see them.

- Validate tool calls before an agent executes actions.

- Do not treat content moderation as a complete prompt-injection defense.

- Use human review for medium-risk or high-impact cases.

- EvoLink's `evolink_summary.risk_level` can simplify allow / review / block logic.

Content moderation is not the same as agent security

Content moderation helps identify harmful text or images. It can reduce the risk of unsafe prompts, harmful outputs, and policy-violating user content.

Agent security is broader. It includes prompt injection, tool permissions, data access, human approvals, structured outputs, evals, logging, and workflow design.

OpenAI's agent safety guide recommends combining techniques such as guardrails, tool approvals, trace grading, evals, and structured workflow design. OWASP also treats prompt injection as a distinct LLM security risk that can lead to data leakage, unintended actions, or tool misuse.


The takeaway: moderation is one guardrail. It should not be the only guardrail.

Layer 1: input moderation

Input moderation checks the user's message before it reaches your model.

Use it to catch:

- clearly harmful requests

- policy-violating user messages

- abusive or harassing text

- unsafe image uploads

- prompts asking for disallowed content

Input moderation is useful because it prevents obviously unsafe requests from entering the model workflow at all.

```python
from openai import OpenAI

# EvoLink is called through an OpenAI-compatible endpoint, so the official SDK is reused.
client = OpenAI(
    api_key="YOUR_EVOLINK_API_KEY",
    base_url="https://direct.evolink.ai/v1",
)


def handle_user_message(user_input: str):
    input_check = client.moderations.create(
        model="evolink-moderation-1.0",
        input=user_input,
    )

    # evolink_summary is not part of the OpenAI response schema,
    # so the SDK exposes it through model_extra.
    summary = input_check.model_extra["evolink_summary"]

    if summary["risk_level"] == "high":
        return {
            "status": "blocked",
            "message": "I can't help with that request.",
        }

    # run_chatbot is a placeholder for your own chatbot call.
    return run_chatbot(user_input)
```

Important limitation: input moderation is not a complete prompt-injection defense. Attackers can use indirect prompts, encoded text, images, external documents, or tool outputs to influence agents later in the workflow.
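The snippet above indexes `model_extra["evolink_summary"]` directly, which raises an exception if the field is ever missing or shaped differently. One option is to fail closed. A minimal sketch, assuming `risk_level` takes the values `low`, `medium`, and `high` used in this guide; `extract_risk_level` and `KNOWN_LEVELS` are hypothetical names.

```python
from typing import Any, Optional

# Assumption: the risk levels the moderation response can return.
KNOWN_LEVELS = {"low", "medium", "high"}


def extract_risk_level(extra: Optional[dict[str, Any]]) -> str:
    """Read evolink_summary.risk_level, treating anything unexpected as high risk."""
    summary = (extra or {}).get("evolink_summary")
    if not isinstance(summary, dict):
        return "high"  # field missing or malformed: fail closed
    level = summary.get("risk_level")
    if isinstance(level, str) and level.lower() in KNOWN_LEVELS:
        return level.lower()
    return "high"  # missing or unrecognized level: fail closed


# Usage with the response object from the example above:
# risk = extract_risk_level(input_check.model_extra)
print(extract_risk_level({"evolink_summary": {"risk_level": "medium"}}))  # medium
print(extract_risk_level(None))  # high
```

Failing closed can block legitimate traffic if the response format changes or the service degrades, so some teams map unexpected responses to review instead of block.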

Layer 2: output moderation

Output moderation checks the model response before it reaches the user.

Use it to catch:

- unsafe generated content

- policy-violating responses

- harmful advice in sensitive domains

- responses that slipped through despite safe-looking input

```python
def safe_chat(user_input: str):
    # Reuses the `client` configured in the previous example.
    input_check = client.moderations.create(
        model="evolink-moderation-1.0",
        input=user_input,
    )
    if input_check.model_extra["evolink_summary"]["risk_level"] == "high":
        return "I can't help with that request."

    # llm.generate is a placeholder for your own model call.
    answer = llm.generate(user_input)

    output_check = client.moderations.create(
        model="evolink-moderation-1.0",
        input=answer,
    )
    if output_check.model_extra["evolink_summary"]["risk_level"] == "high":
        return "I generated a response that needs to be revised. Please try rephrasing."

    return answer
```

Output moderation is especially useful for public chatbots, AI copilots, and generated-content workflows where the model response becomes user-visible.
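If your chatbot streams tokens, the check-then-return pattern above cannot run until the whole answer exists, and streaming raw tokens would show users unmoderated text. One option is to buffer the stream and release text only after a moderation check passes. A minimal sketch, assuming a `classify(text) -> risk_level` callable that wraps your moderation call; `moderated_stream` and `check_every` are hypothetical names.

```python
from typing import Callable, Iterable, Iterator

STOP_NOTICE = "\n[Response stopped by content moderation.]"


def moderated_stream(
    chunks: Iterable[str],
    classify: Callable[[str], str],
    check_every: int = 200,
) -> Iterator[str]:
    """Yield model output in batches, moderating everything generated so far before each release."""
    released = ""  # text already shown to the user
    pending = ""   # text generated but not yet moderated
    for chunk in chunks:
        pending += chunk
        if len(pending) >= check_every:
            # Check the full transcript, so harmful text split across batches is still seen.
            if classify(released + pending) == "high":
                yield STOP_NOTICE
                return
            released += pending
            yield pending
            pending = ""
    # Final check on whatever remains when the stream ends.
    if pending:
        if classify(released + pending) == "high":
            yield STOP_NOTICE
            return
        yield pending


# Usage with a stand-in classifier; replace the lambda with a call to your moderation endpoint.
for piece in moderated_stream(["Hello, ", "how can I help?"], lambda text: "low", check_every=10):
    print(piece, end="")
```

Re-checking the whole transcript costs more as responses grow; checking a sliding window of recent batches is a cheaper trade-off, at the risk of missing context that spans the window.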

Layer 3: tool-call validation

For AI agents, tool calls are often the highest-risk layer.

If an agent can send emails, query databases, update tickets, call APIs, or write files, the tool call should be validated before execution. This is not just moderation. It is authorization and policy enforcement.

Validate:

- tool name

- allowed user or account scope

- required human approval

- allowed parameter values

- read vs write operations

- external recipients

- destructive actions

- data exfiltration risk

```python
# Simplified example: production systems should use parameterized queries
# and proper authorization layers, not string matching.
def validate_tool_call(tool_name: str, args: dict, user_context: dict):
    if tool_name == "send_email":
        if not args.get("to", "").endswith("@company.com"):
            return False, "External email requires approval."

    if tool_name == "database_query":
        query = args.get("query", "")
        forbidden = ["DROP ", "DELETE ", "TRUNCATE "]
        if any(word in query.upper() for word in forbidden):
            return False, "Destructive database operation blocked."

    if tool_name == "refund_customer":
        if args["amount"] > user_context["approval_limit"]:
            return False, "Refund amount requires human approval."

    # Tools not listed above fall through and are allowed here; a stricter
    # design denies any tool that is not explicitly allowlisted.
    return True, None
```

OpenAI's agent safety guide also recommends keeping tool approvals on for MCP tools so end users can review and confirm operations.
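The `endswith` check in `validate_tool_call` is intentionally simple, but it can be bypassed: a `to` field such as `"x@evil.com, a@company.com"` ends with `@company.com` while still including an external recipient. A sketch that parses every address with the standard library's `email.utils.getaddresses` and compares domains exactly; `company.com`, `INTERNAL_DOMAINS`, and `all_recipients_internal` are placeholders for illustration.

```python
from email.utils import getaddresses

INTERNAL_DOMAINS = {"company.com"}  # placeholder: your organization's domains


def all_recipients_internal(*fields: str) -> bool:
    """Return True only if every address in the given header fields is internal."""
    addresses = [addr for _, addr in getaddresses(list(fields))]
    if not addresses:
        return False  # nothing parseable: treat as needing approval
    for addr in addresses:
        local, sep, domain = addr.rpartition("@")
        # Exact domain match: subdomains and look-alike domains are treated as external.
        if not sep or not local or domain.lower() not in INTERNAL_DOMAINS:
            return False
    return True
```

In `validate_tool_call`, this would replace the `endswith` line, for example `if not all_recipients_internal(args.get("to", ""))`. If your email tool also accepts `cc` or `bcc`, pass those fields in as well.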

Layer 4: allow / review / block routing

Binary safe/unsafe moderation is usually too blunt. A more useful production pattern is:

| Risk | Action |
|---|---|
| Low | allow |
| Medium | queue for review, warn, or limit distribution |
| High | block or require appeal |

EvoLink's moderation response includes an `evolink_summary` object with a `risk_level` field designed for this kind of routing.

```python
def route_by_risk(content: str):
    result = client.moderations.create(
        model="evolink-moderation-1.0",
        input=content,
    )
    summary = result.model_extra["evolink_summary"]

    # log_block and queue_for_review are placeholders for your own logging and review tooling.
    if summary["risk_level"] == "high":
        log_block(summary)
        return {"action": "block"}

    if summary["risk_level"] == "medium":
        queue_for_review(content, summary)
        return {"action": "review"}

    return {"action": "allow"}
```

Full chatbot moderation pattern

Here is a practical end-to-end shape:

```python
def handle_chatbot_turn(user_input: str, user_context: dict):
    # agent, execute_tool_calls, log_review_event, and log_security_event are
    # placeholders for your own agent framework and logging.

    # 1. Moderate input
    input_route = route_by_risk(user_input)
    if input_route["action"] == "block":
        return "I can't help with that request."
    if input_route["action"] == "review":
        log_review_event("input", user_input)

    # 2. Generate model response and proposed tool calls
    agent_result = agent.run(user_input, user_context)

    # 3. Validate tool calls before execution
    for call in agent_result.tool_calls:
        ok, reason = validate_tool_call(call.name, call.args, user_context)
        if not ok:
            log_security_event(call, reason)
            return "That action requires additional review."

    # 4. Execute approved tools
    tool_outputs = execute_tool_calls(agent_result.tool_calls)

    # 5. Generate final response
    final_answer = agent.format_response(tool_outputs)

    # 6. Moderate output
    output_route = route_by_risk(final_answer)
    if output_route["action"] == "block":
        return "I need to revise that response."
    # Medium-risk output was already queued inside route_by_risk,
    # so it is shown here while a reviewer checks it.

    return final_answer
```

The exact implementation will vary, but the shape is stable: check input, constrain actions, check output, and log decisions.
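`route_by_risk` applies the same mapping to every check. Because output is user-visible, you may want stricter handling there than on input. A minimal sketch of a per-surface policy table; the surface names and the choice to block medium-risk public output are illustrative assumptions, not EvoLink defaults, and `ROUTING_POLICY` and `action_for` are hypothetical names.

```python
# Hypothetical policy: the action for each risk level can differ by surface.
ROUTING_POLICY: dict[str, dict[str, str]] = {
    "user_input": {"low": "allow", "medium": "review", "high": "block"},
    # Public output is user-visible, so this example treats medium risk as a block.
    "public_output": {"low": "allow", "medium": "block", "high": "block"},
}


def action_for(surface: str, risk_level: str) -> str:
    """Look up the action for a surface and risk level; unknown combinations go to review."""
    return ROUTING_POLICY.get(surface, {}).get(risk_level, "review")


print(action_for("user_input", "medium"))     # review
print(action_for("public_output", "medium"))  # block
```

Unknown surfaces or risk levels fall back to review rather than allow, so a typo in the policy fails toward human oversight instead of silently allowing content.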

Handling false positives

False positives are unavoidable. The goal is to reduce harm without blocking legitimate users too often.

Use a review queue for:

- medium-risk content

- unclear context

- policy-sensitive categories

- high-value users or high-impact actions

- appeals
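A review queue is usually worked in priority order rather than strictly first-come, first-served. A minimal sketch using the standard library's `heapq`, assuming the reason labels and priorities shown; `ReviewQueue`, `ReviewItem`, and the `PRIORITY` values are hypothetical and should reflect your own policy.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Hypothetical priorities: lower numbers are reviewed first.
PRIORITY = {
    "high_impact_action": 0,
    "appeal": 1,
    "policy_sensitive": 2,
    "medium_risk": 3,
    "unclear_context": 3,
}


@dataclass(order=True)
class ReviewItem:
    priority: int
    seq: int  # tie-breaker so equal priorities stay first-in, first-out
    reason: str = field(compare=False)
    content_id: str = field(compare=False)


class ReviewQueue:
    def __init__(self) -> None:
        self._heap: list[ReviewItem] = []
        self._counter = itertools.count()

    def push(self, reason: str, content_id: str) -> None:
        # Unknown reasons get the lowest priority rather than being dropped.
        priority = PRIORITY.get(reason, max(PRIORITY.values()) + 1)
        heapq.heappush(self._heap, ReviewItem(priority, next(self._counter), reason, content_id))

    def pop(self) -> ReviewItem:
        """Return the most urgent item (raises IndexError if the queue is empty)."""
        return heapq.heappop(self._heap)

    def __len__(self) -> int:
        return len(self._heap)
```

Storing a content ID rather than the content itself keeps the queue consistent with the logging advice below; reviewers fetch the content through an access-controlled tool.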

Log each moderation decision with enough context to debug later:

- content hash

- user id or account id

- risk level

- triggered categories

- action taken

- reviewer override

- timestamp

Avoid logging raw sensitive content unless you have a clear retention and privacy policy.
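The fields above map onto a small record type. A minimal sketch, assuming you pass in the risk level and category names from your moderation result; `ModerationLogRecord`, `build_log_record`, and `LOG_HASH_KEY` are hypothetical names. It stores a keyed HMAC-SHA256 of the content rather than the text itself: a plain hash of a short message can be reversed by hashing likely guesses, while the keyed version still lets you match repeated content.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Iterable, Optional

# Placeholder secret; load it from a secrets manager in a real deployment.
LOG_HASH_KEY = b"replace-with-a-secret-key"


@dataclass(frozen=True)
class ModerationLogRecord:
    content_hash: str
    account_id: str
    risk_level: str
    categories: tuple[str, ...]
    action: str
    reviewer_override: Optional[str]  # reviewer's final action, or None if not reviewed
    timestamp: str


def build_log_record(
    content: str,
    account_id: str,
    risk_level: str,
    categories: Iterable[str],
    action: str,
    reviewer_override: Optional[str] = None,
) -> ModerationLogRecord:
    """Build a log entry that identifies content by keyed hash instead of raw text."""
    digest = hmac.new(LOG_HASH_KEY, content.encode("utf-8"), hashlib.sha256).hexdigest()
    return ModerationLogRecord(
        content_hash=digest,
        account_id=account_id,
        risk_level=risk_level,
        categories=tuple(sorted(categories)),
        action=action,
        reviewer_override=reviewer_override,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


# "harassment" is an illustrative category name, not a specific API value.
record = build_log_record("example message", "acct_123", "medium", ["harassment"], "review")
print(json.dumps(asdict(record)))
```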

What to promise internally

Do not promise that moderation makes a chatbot "100% safe."

A better promise is:

- harmful inputs are checked before model execution

- model outputs are checked before user display

- agent tool calls are validated before execution

- high-risk or ambiguous cases can be routed to humans

- logs and review outcomes are used to improve thresholds

That is a realistic production posture.

Common mistakes

Mistake 1: only moderating inputs

Input checks are useful, but the model can still generate unsafe output. Moderate outputs before showing them to users.

Mistake 2: treating prompts as security boundaries

System prompts guide behavior, but they should not be the only security layer. Use tool permissions, structured workflows, and approvals.

Mistake 3: letting agents execute tools without validation

Always validate tool calls outside the model. The model should propose actions; your application should decide whether the action is allowed.
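In practice this means parsing the model's proposed call as untrusted data and checking it against an allowlist your application owns. A minimal sketch, assuming the model emits a JSON object with `name` and `args` keys; `TOOL_REGISTRY`, `ToolSpec`, and `decide` are hypothetical, and the tool names are illustrative.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    allowed_args: frozenset[str]
    writes: bool  # write operations need explicit approval in this sketch


# Hypothetical registry: only tools listed here can ever run.
TOOL_REGISTRY = {
    "search_tickets": ToolSpec(frozenset({"query"}), writes=False),
    "update_ticket": ToolSpec(frozenset({"ticket_id", "status"}), writes=True),
}


def decide(proposal_json: str, approved_by_human: bool = False) -> tuple[str, str]:
    """Turn a model-proposed tool call into ("allow" | "review" | "block", reason)."""
    try:
        proposal = json.loads(proposal_json)
    except json.JSONDecodeError:
        return "block", "proposal is not valid JSON"
    if not isinstance(proposal, dict):
        return "block", "proposal must be a JSON object"

    name, args = proposal.get("name"), proposal.get("args")
    spec = TOOL_REGISTRY.get(name) if isinstance(name, str) else None
    if spec is None:
        return "block", f"unknown tool: {name!r}"
    if not isinstance(args, dict) or not set(args) <= spec.allowed_args:
        return "block", "unexpected or malformed arguments"
    if spec.writes and not approved_by_human:
        return "review", "write operation requires approval"
    return "allow", "ok"
```

The sketch denies anything not in the registry and rejects unexpected arguments, but it does not check that required arguments are present or that values are in range; those checks belong in the same application-side layer.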

Mistake 4: blocking every uncertain case

Overly strict systems create user frustration. Send uncertain cases to review instead of always blocking them.

Mistake 5: keeping no logs

Without logs, you cannot tune thresholds, investigate incidents, or understand false positives.
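One concrete use of those logs is measuring how often reviewers overturn automated decisions, a rough signal of false positives at each action level. A minimal sketch, assuming each log entry is a dict with `action` and `reviewer_override` keys, where `reviewer_override` holds the reviewer's final action or `None` if no human reviewed it; `override_rates` is a hypothetical helper.

```python
from collections import Counter
from typing import Iterable, Mapping, Optional


def override_rates(records: Iterable[Mapping[str, Optional[str]]]) -> dict[str, float]:
    """Share of human-reviewed decisions, per automated action, that the reviewer changed."""
    reviewed: Counter = Counter()
    overturned: Counter = Counter()
    for r in records:
        override = r.get("reviewer_override")
        if override is None:
            continue  # not reviewed, so it says nothing about accuracy
        reviewed[r["action"]] += 1
        if override != r["action"]:
            overturned[r["action"]] += 1
    return {action: overturned[action] / reviewed[action] for action in reviewed}
```

Reviewed items are rarely a random sample, so treat these rates as a trend to watch when adjusting thresholds rather than an exact false-positive rate.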

Implementation checklist

- Moderate user inputs before model calls.

- Moderate model outputs before user display.

- Validate agent tool calls before execution.

- Use allow / review / block routing.

- Queue medium-risk content for review.

- Add user reporting and appeals where needed.

- Log risk levels, categories, actions, and reviewer overrides.

- Avoid storing raw sensitive content unless required.

- Test with real examples from your product.

- Revisit thresholds after production data arrives.
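A small labeled set from your own product makes the last two items repeatable. A minimal sketch, assuming `route` is any function that maps text to `"allow"`, `"review"`, or `"block"`; `evaluate` is a hypothetical helper.

```python
from collections import Counter
from typing import Callable, Sequence


def evaluate(
    examples: Sequence[tuple[str, str]],
    route: Callable[[str], str],
) -> tuple[Counter, list[tuple[str, str, str]]]:
    """Run labeled examples through `route`.

    Returns a Counter of (expected, actual) pairs and a list of
    mismatches as (text, expected, actual) tuples.
    """
    confusion: Counter = Counter()
    mismatches: list[tuple[str, str, str]] = []
    for text, expected in examples:
        actual = route(text)
        confusion[(expected, actual)] += 1
        if actual != expected:
            mismatches.append((text, expected, actual))
    return confusion, mismatches


# Usage with a stand-in router; swap in a side-effect-free wrapper around your moderation call.
labeled = [
    ("What are your opening hours?", "allow"),
    ("borderline example from your logs", "review"),
]
confusion, mismatches = evaluate(labeled, lambda text: "allow")
for text, expected, actual in mismatches:
    print(f"expected {expected}, got {actual}: {text}")
```

`route_by_risk` queues medium-risk content and logs blocks as side effects, so for offline evaluation you may want a variant that only returns the action. Rerunning the same set after each threshold change shows whether mismatches moved in the direction you intended.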

FAQ

Should I moderate chatbot inputs or outputs?

Both. Input moderation can reduce unsafe requests before they reach the model. Output moderation can catch unsafe generated content before users see it.

Does content moderation stop prompt injection?

No. Moderation can catch some unsafe or suspicious content, but prompt injection requires broader defenses such as tool validation, structured outputs, least privilege, human approvals, and workflow isolation.

Where should I place moderation in an AI agent?

Place moderation before the model for user input, before display for model output, and combine it with tool-call validation before any agent action is executed.

How does EvoLink help with chatbot moderation?

EvoLink's moderation response includes `evolink_summary.risk_level`, making it easier to route chatbot content into allow, review, or block workflows.

Do I still need human review?

For production systems, yes. Human review is important for appeals, medium-risk cases, policy changes, and high-impact decisions.

Related moderation guides

- OpenAI Moderation API Pricing: Is It Free? Limits and Alternatives

- Image Moderation API Guide: How to Filter Unsafe User-Uploaded Images

- omni-moderation-latest Explained: OpenAI Moderation API Guide

- Best Content Moderation APIs and Tools for Developers