Image Moderation API Guide for User-Uploaded Images

The hard part is not calling the API. The hard part is designing the upload workflow around it.

TL;DR

- Use image moderation when public or semi-public users can upload avatars, posts, marketplace photos, AI-generated images, or message attachments.

- Run server-side moderation before images become publicly visible.

- Use temporary storage, then move approved images into permanent storage.

- Treat automated moderation as a first layer, not a perfect final decision.

- Add human review, user reporting, and appeals for ambiguous cases.

- EvoLink Moderation supports image-only and text-plus-image requests through an OpenAI-compatible `/v1/moderations` endpoint.

Why image moderation is different from text moderation

Text moderation and image moderation solve related but different problems.

Text moderation can inspect words, phrases, and language patterns. Image moderation needs to interpret pixels, objects, visual context, and sometimes text embedded in an image.

That creates several differences:

| Issue | Text moderation | Image moderation |
|---|---|---|
| Input size | Usually small | Often much larger |
| Context | Language and conversation | Visual scene, objects, OCR, captions |
| Common errors | Sarcasm, coded language, context misses | Art, medical images, swimwear, memes, crops, overlays |
| Workflow impact | Comment rejected or queued | Uploaded media may need temporary storage |
| User sensitivity | User can often rewrite | Rejected profile photos or personal images can feel more sensitive |

This is why a reliable image moderation system usually combines an API with product rules, review queues, and user reporting.

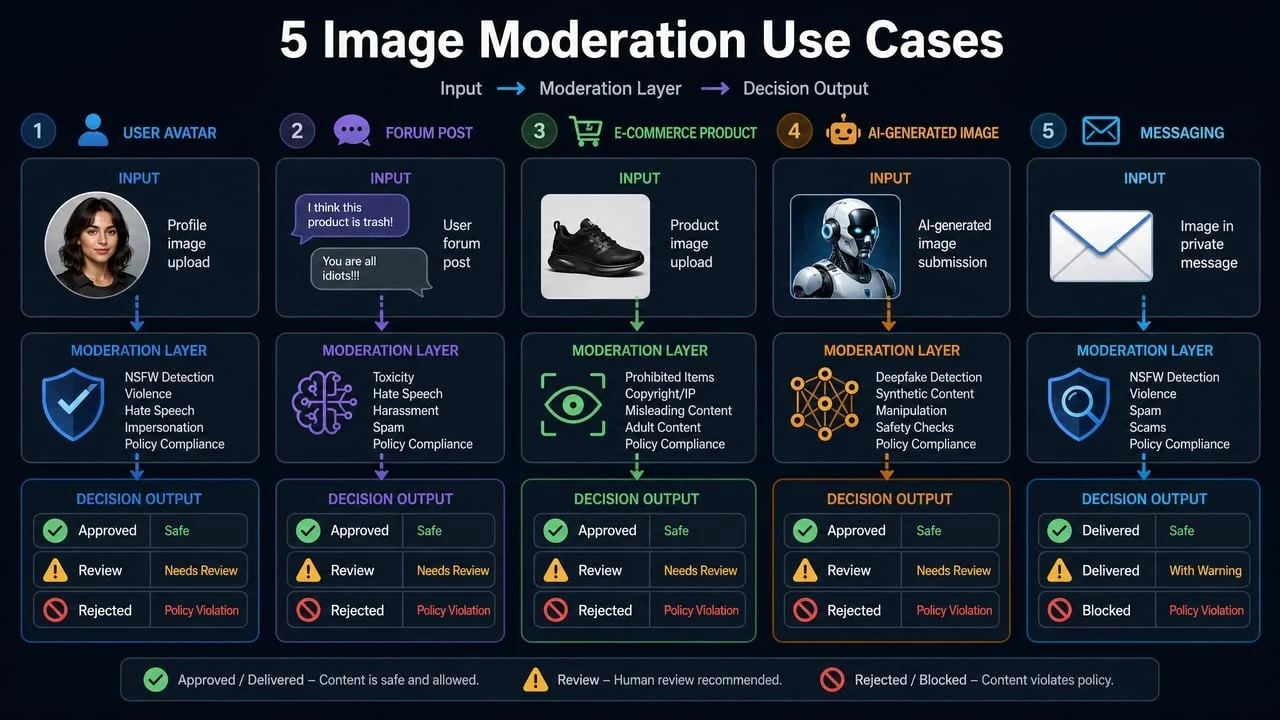

Common image moderation use cases

1. Profile pictures and avatars

Profile pictures are visible across the product. They are also easy to abuse.

Moderation checks often look for:

- sexual or explicit imagery

- graphic violence

- self-harm imagery

- hateful symbols

- threatening or intimidating visuals

For avatars, false positives matter. If a normal profile photo is rejected, the user experience can become frustrating quickly. A good pattern is to auto-block only high-risk images, send uncertain images to review, and allow clear low-risk images.

2. Forum and social media uploads

Forums and social platforms often combine text and images. A post caption can change the meaning of an image, and an image can change the meaning of a caption.

For these workflows, text-plus-image moderation is useful because the application can evaluate both parts of a submission before publication.

3. Marketplace product photos

Marketplace image moderation is not only about NSFW content. Product platforms may also need to detect:

- prohibited items

- weapons or drugs

- watermarks

- image quality problems

- misleading photos

- policy-sensitive visual claims

For image quality checks, OCR, watermark detection, or specialized marketplace rules, a dedicated visual moderation service such as Sightengine, Amazon Rekognition, or Azure AI Content Safety may be useful depending on the workflow.

4. AI-generated images

AI-generated images can still violate content policies. If users generate images inside your app or upload AI-generated assets, moderate both:

- the prompt or caption

- the generated image

This is especially important when outputs are public, monetized, or tied to a brand-safe experience.

5. Messaging attachments

Private or semi-private messages create a harder product decision because moderation intersects with privacy expectations. Some products scan uploads before delivery; others scan only reported content; some combine client-side warnings with server-side enforcement.

The right design depends on the product, legal obligations, privacy policy, and user expectations.

How image moderation APIs work

Most image moderation APIs follow the same basic pattern:

- You provide an image URL, uploaded file, storage object, or image bytes.

- The API analyzes the image with one or more moderation models.

- The response returns category labels, confidence scores, or boolean flags.

- Your application decides whether to allow, review, or block the image.
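As a rough illustration of the last step, the sketch below maps per-category confidence scores to an allow / review / block decision. The category names, the 0.0 to 1.0 score scale, and both thresholds are assumptions chosen for illustration, not values from any particular provider.

```python
# Hypothetical thresholds; tune them against your own labeled samples.
REVIEW_THRESHOLD = 0.40
BLOCK_THRESHOLD = 0.85


def decide(category_scores: dict[str, float]) -> str:
    """Return "allow", "review", or "block" based on the highest category score."""
    top_score = max(category_scores.values(), default=0.0)
    if top_score >= BLOCK_THRESHOLD:
        return "block"
    if top_score >= REVIEW_THRESHOLD:
        return "review"
    return "allow"


# Made-up scores for illustration only.
print(decide({"sexual": 0.02, "violence": 0.91}))
print(decide({"sexual": 0.55, "violence": 0.10}))
```

Using the single highest score keeps the rule easy to reason about. Per-category thresholds are a natural extension if some categories need stricter handling than others.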

Amazon Rekognition's DetectModerationLabels API returns moderation labels with confidence scores and a hierarchical taxonomy. Sightengine's image moderation API returns detailed moderation scores for selected models. OpenAI's Moderation endpoint returns category flags and scores for text and images, with image support varying by category.

Sources:

- OpenAI Moderation guide

- Amazon Rekognition DetectModerationLabels

- Sightengine image moderation principles

Recommended upload architecture

For most public applications, the safest pattern is:

    user upload
      -> temporary storage
      -> image moderation API
      -> allow / review / block
      -> permanent storage only if allowed or review-approved

This avoids making unsafe images public before they are checked.

Pattern 1: pre-upload client-side warning

Client-side checks can warn users quickly, but they should not be your only enforcement layer.

Use client-side checks for:

- instant user feedback

- reducing obvious abuse before upload

- saving bandwidth in low-risk flows

But always enforce policy server-side, because client-side checks can be bypassed.

Pattern 2: post-upload, pre-publication moderation

This is the default production pattern.

- Upload the image to temporary private storage.

- Send the image URL to a moderation API.

- If low risk, move it to permanent storage.

- If medium risk, queue it for review.

- If high risk, reject and delete the temporary file.
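The steps above can be sketched as a single routing function. This is a minimal sketch under stated assumptions: local directories stand in for private, review, and public storage, and `get_risk_level` is a hypothetical callable that wraps your moderation API call and returns "low", "medium", or "high".

```python
import shutil
from pathlib import Path
from typing import Callable


def process_upload(
    temp_path: Path,
    public_dir: Path,
    review_dir: Path,
    get_risk_level: Callable[[Path], str],
) -> str:
    """Route a temporarily stored upload based on its moderation risk level."""
    risk = get_risk_level(temp_path)
    if risk == "low":
        # Approved: move out of temporary storage into the public location.
        public_dir.mkdir(parents=True, exist_ok=True)
        shutil.move(str(temp_path), str(public_dir / temp_path.name))
        return "approved"
    if risk == "medium":
        # Uncertain: keep the file private and hand it to human review.
        review_dir.mkdir(parents=True, exist_ok=True)
        shutil.move(str(temp_path), str(review_dir / temp_path.name))
        return "in_review"
    if risk == "high":
        # Rejected: delete the temporary file.
        temp_path.unlink(missing_ok=True)
        return "rejected"
    # Unknown value: leave the file in private temporary storage and surface the error.
    raise ValueError(f"unexpected risk level: {risk!r}")
```

With object storage, the same flow would use a copy-then-delete between a private bucket and a public one instead of `shutil.move`.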

Pattern 3: asynchronous moderation

For trusted communities or high-volume workflows, you may store first and moderate in the background. If you do this, keep images private until approved or visible only to the uploader until checks finish.
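One way to enforce that rule is a visibility check tied to moderation status. The sketch below is illustrative only; the `Status` values, the `Image` record, and `can_view` are hypothetical names, not part of any moderation API.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Image:
    owner_id: str
    status: Status = Status.PENDING  # every upload starts unapproved


def can_view(image: Image, viewer_id: str) -> bool:
    """Approved images are public; pending ones are visible only to the uploader."""
    if image.status is Status.APPROVED:
        return True
    if image.status is Status.PENDING:
        return viewer_id == image.owner_id
    return False  # rejected images are hidden from everyone
```

The background moderation job then only has to flip `status`; every read path goes through `can_view`.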

Using EvoLink Moderation as an image moderation API

EvoLink Moderation supports:

- text-only moderation

- image-only moderation

- text-plus-single-image moderation

It exposes an OpenAI-compatible `/v1/moderations` endpoint and returns standard moderation fields plus `evolink_summary`.

The summary is designed for product decisions:

- `risk_level`

- `flagged`

- `violations`

- `max_score`

- `max_category`

Image-only example

    from openai import OpenAI

    # Point the OpenAI SDK at EvoLink's OpenAI-compatible endpoint.
    client = OpenAI(
        api_key="YOUR_EVOLINK_API_KEY",
        base_url="https://direct.evolink.ai/v1"
    )

    response = client.moderations.create(
        model="evolink-moderation-1.0",
        input=[
            {
                "type": "image_url",
                "image_url": {
                    "url": "https://example.com/user-upload.jpg"
                }
            }
        ]
    )

    # evolink_summary is an extra field on the response, so read it via model_extra.
    summary = response.model_extra["evolink_summary"]

    # block_image, send_to_review, and approve_image are placeholders for your own handlers.
    if summary["risk_level"] == "high":
        block_image()
    elif summary["risk_level"] == "medium":
        send_to_review()
    else:
        approve_image()

Text-plus-image example

    # Reuses the client from the image-only example.
    response = client.moderations.create(
        model="evolink-moderation-1.0",
        input=[
            {"type": "text", "text": "User caption or message"},
            {
                "type": "image_url",
                "image_url": {
                    "url": "https://example.com/user-upload.jpg"
                }
            }
        ]
    )

Handling multiple images

EvoLink Moderation supports one image per request. If a user uploads multiple images, send concurrent requests or process them through a queue.

    def decide_album_status(results):
        """Return the highest risk level found across per-image moderation responses."""
        if not results:
            return "low"

        risk_order = {"low": 0, "medium": 1, "high": 2}

        # Pick the response whose summary carries the most severe risk level.
        highest = max(
            results,
            key=lambda r: risk_order[r.model_extra["evolink_summary"]["risk_level"]]
        )
        return highest.model_extra["evolink_summary"]["risk_level"]

In many apps, one high-risk image should block the whole submission, while medium-risk images can send the submission to review.
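The concurrent-request approach could look like the following sketch, which uses a thread pool from the Python standard library. `get_risk_level` is a hypothetical callable that sends one image URL to the moderation API and returns "low", "medium", or "high"; the worker count is an arbitrary assumption.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

RISK_ORDER = {"low": 0, "medium": 1, "high": 2}
DECISIONS = {"low": "approve", "medium": "review", "high": "block"}


def moderate_album(
    image_urls: list[str],
    get_risk_level: Callable[[str], str],
    max_workers: int = 4,
) -> str:
    """Moderate each image concurrently and return one decision for the submission."""
    if not image_urls:
        return "approve"
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # One request per image; an exception in any request propagates here.
        levels = list(pool.map(get_risk_level, image_urls))
    worst = max(levels, key=RISK_ORDER.__getitem__)
    return DECISIONS[worst]
```

Because a failed request raises instead of returning a level, the submission is never approved on partial results.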

Best practices for production image moderation

1. Moderate server-side

Client-side checks can improve UX, but the server must make the final decision. Otherwise, users can bypass the check by calling your upload API directly.

2. Use temporary storage

Do not immediately publish an uploaded image. Store it privately, moderate it, then move it to the public bucket or CDN only after approval.

3. Use tiered decisions

Avoid a simple binary branch. A three-tier workflow gives you more control:

- low risk: approve

- medium risk: review

- high risk: block

4. Give specific user feedback

Generic rejection messages create support load. When possible, explain the category at a high level:

- "This image appears to contain sexual content."

- "This image appears to contain graphic violence."

- "This image requires review before publication."

Avoid exposing exact thresholds or model weaknesses that could help users evade moderation.
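A simple way to keep feedback specific without leaking scores is a lookup from the top category to a fixed message. In this sketch the category keys are hypothetical; map them to whatever labels your moderation API actually returns.

```python
from typing import Optional

# Hypothetical category keys mapped to high-level, user-facing messages.
USER_MESSAGES = {
    "sexual": "This image appears to contain sexual content.",
    "graphic_violence": "This image appears to contain graphic violence.",
}
# Used when the category is missing or has no dedicated message.
FALLBACK_MESSAGE = "This image requires review before publication."


def user_message(top_category: Optional[str]) -> str:
    """Return a high-level explanation that exposes no scores or thresholds."""
    return USER_MESSAGES.get(top_category, FALLBACK_MESSAGE)
```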

5. Add reporting and appeals

No model is perfect. Add user reports and appeals so your team can correct false positives and catch false negatives.

6. Track moderation quality

Measure:

- rejection rate

- review queue rate

- reviewer override rate

- appeal success rate

- user report rate

- repeat offender rate

- latency and API error rate

These numbers help you tune thresholds and decide when to add another moderation layer.
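Several of these rates are simple ratios over counters you probably already log. The sketch below shows one possible set of definitions; the field names and the choice of denominators are assumptions, so adjust them to match how your team defines each metric.

```python
from dataclasses import dataclass


@dataclass
class ModerationCounts:
    uploads: int
    rejected: int
    queued_for_review: int
    reviewer_overrides: int  # reviews where a human changed the automated outcome
    appeals: int
    appeals_granted: int


def rate(numerator: int, denominator: int) -> float:
    """Safe ratio that returns 0.0 when there is no data yet."""
    return numerator / denominator if denominator else 0.0


def quality_report(c: ModerationCounts) -> dict[str, float]:
    return {
        "rejection_rate": rate(c.rejected, c.uploads),
        "review_queue_rate": rate(c.queued_for_review, c.uploads),
        "reviewer_override_rate": rate(c.reviewer_overrides, c.queued_for_review),
        "appeal_success_rate": rate(c.appeals_granted, c.appeals),
    }
```

A rising reviewer override rate or appeal success rate is a hint that automated thresholds are too strict.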

7. Test with real content

Use anonymized or policy-safe samples from your own application. Public benchmark claims rarely predict how a moderation API will behave on your exact community, marketplace, or image style.
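Once you have a labeled sample set, a small evaluation helper makes the comparison concrete. This sketch assumes each sample is a pair of booleans: your own policy label (`should_block`) and the decision your pipeline produced (`was_blocked`). Both names are illustrative.

```python
def error_rates(samples: list[tuple[bool, bool]]) -> dict[str, float]:
    """Compute error rates from (should_block, was_blocked) pairs."""
    false_pos = sum(1 for truth, pred in samples if pred and not truth)
    false_neg = sum(1 for truth, pred in samples if truth and not pred)
    safe_total = sum(1 for truth, _ in samples if not truth)
    unsafe_total = sum(1 for truth, _ in samples if truth)
    return {
        # Share of acceptable images that were wrongly blocked.
        "false_positive_rate": false_pos / safe_total if safe_total else 0.0,
        # Share of policy-violating images that were wrongly allowed.
        "false_negative_rate": false_neg / unsafe_total if unsafe_total else 0.0,
    }
```

Running this per content type (avatars, product photos, memes) tends to be more informative than a single aggregate number.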

Common mistakes

Mistake 1: assuming one API catches everything

Image moderation APIs are useful, but they do not understand every product policy. You may still need custom rules for marketplace categories, brand safety, copyright, watermarks, or local compliance.

Mistake 2: publishing before moderation finishes

If unsafe images can appear even briefly, users may screenshot and share them. Keep uploads private until checks finish.

Mistake 3: blocking every uncertain image

Overly strict thresholds create false positives. Send uncertain content to review instead of rejecting everything automatically.

Mistake 4: ignoring text around the image

A caption can change an image's meaning. If your product allows captions, moderate text and image together.

Mistake 5: forgetting privacy and retention

Images may include faces, documents, private spaces, or minors. Review your vendor's data handling, retention, region, and training-use terms before production use.

When to use a specialized image moderation provider

EvoLink Moderation is a good fit for text and image safety decisions inside an OpenAI-compatible API workflow.

Consider a specialized image provider such as Sightengine, Amazon Rekognition, or Azure AI Content Safety when you need:

- OCR-heavy moderation

- watermark detection

- face detection

- deepfake detection

- video moderation

- live-stream moderation

- custom visual classes

- cloud-native integration with AWS or Azure

The right answer may be a layered stack: EvoLink for text-plus-image safety routing, plus a specialized visual service for image quality, OCR, or marketplace-specific checks.

FAQ

What is an image moderation API?

An image moderation API analyzes images and returns safety signals such as category labels, confidence scores, or risk levels. Applications use those signals to allow, review, or block uploaded images.

Does EvoLink Moderation support image moderation?

Yes. EvoLink Moderation 1.0 supports image-only requests and text-plus-single-image requests through an OpenAI-compatible moderation endpoint.

Can I moderate multiple images in one EvoLink request?

No. EvoLink Moderation supports one image per request. For multiple images, send concurrent requests or use a queue.

Should I moderate images before or after upload?

Use temporary upload storage first, moderate server-side, then move approved images into permanent storage. This keeps unsafe images from becoming public before checks finish.

Is image moderation enough for a marketplace?

Usually not by itself. Marketplaces often need safety checks plus quality rules, prohibited item checks, OCR, watermark detection, and human review.

Related moderation guides

- Best Content Moderation APIs and Tools for Developers

- omni-moderation-latest Explained: OpenAI Moderation API Guide

- OpenAI Moderation API Pricing: Is It Free? Limits and Alternatives

- How to Add Content Moderation to Your Chatbot or AI Agent