What China’s 2026 Spring Festival Gala Revealed About ByteDance’s AI Video Stack

The gala showed how ByteDance was positioning multiple AI layers together:

- video generation

- language and interaction

- live production infrastructure

Why The Gala Mattered

| Signal | Why it mattered |
|---|---|
| AI was visible in a mainstream national broadcast | It moved the conversation beyond lab demos |
| Multiple ByteDance AI surfaces appeared together | It suggested a coordinated product stack, not isolated experiments |
| The context was live, high-pressure, and public | It implied confidence in production-grade deployment |
| Massive reported reach | CMG reported 677 million all-media live reach, with peak concurrent viewership above 400 million, so the AI segments played to a mass audience |

Seedance 2.0: What It Represented

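The most interesting Seedance angle was not just that AI-generated visuals appeared in a large broadcast. It was that they were used as a directed production element, composed alongside live performers rather than shown as a standalone demo.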

That matters because Seedance 2.0 is easier to understand when you stop thinking about it as a novelty generator and start thinking about it as a tool for:

- visual direction

- structured motion

- scene shaping

- stylized spectacle at scale

The gala context reinforced exactly that positioning.

The standout AI moment was He Hua Shen (《贺花神》, "Ode to the Flower Deities"), a dance performance where AI-generated visuals of blooming flowers, flowing water, and seasonal transitions were seamlessly integrated with live performers. What made this technically significant:

- Close-up shots required near-flawless generation, because any jitter or distortion would be visible to hundreds of millions of viewers

- Micro-changes like the slow blooming of a flower demanded precise temporal control over texture, layers, light, and shadow

- ByteDance described this as a shift from "AI-generated content" to "AI-directed content"

Doubao and The Broader ByteDance Stack

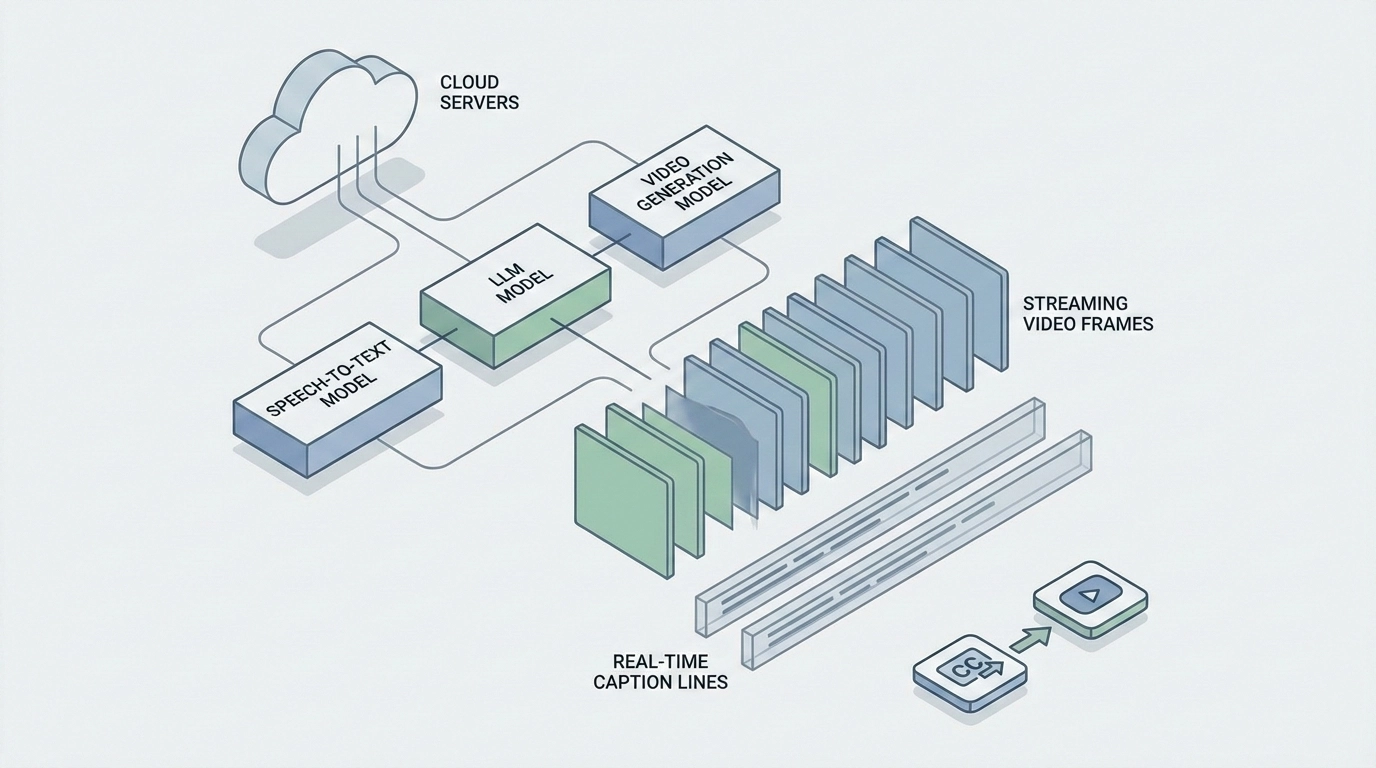

Seedance was only one layer.

| Layer | What the gala suggested |
|---|---|
| Video generation | Seedance-style visual creation and scene support |
| Language / interaction | Doubao-style reasoning and communication surfaces |
| Infrastructure | A cloud and production layer capable of supporting a public-scale event |

The scale of deployment was significant:

- 800 billion+ tokens processed daily across the Volcengine platform

- 1 million+ enterprises using Volcengine AI services across 100+ industries

That combination is strategically important. It suggests ByteDance is not only chasing isolated model quality. It is building an ecosystem where different AI components reinforce each other.
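To put the daily token figure in perspective, it helps to convert it into an average rate. The snippet below is a back-of-the-envelope sketch, not a measurement: it assumes the quoted 800 billion tokens are spread evenly across the day, which real traffic almost certainly is not, and the function name is chosen only for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_per_second(daily_total: float) -> float:
    """Convert a daily total into an average per-second rate.

    Assumes perfectly uniform load, so this is a floor on what
    peak traffic would look like, not an estimate of it.
    """
    return daily_total / SECONDS_PER_DAY


# Figure quoted in this article: 800 billion+ tokens per day on Volcengine.
daily_tokens = 800e9
rate = average_per_second(daily_tokens)
print(f"{rate / 1e6:.2f} million tokens per second on average")
# prints "9.26 million tokens per second on average"
```

Even as a uniform average, that is roughly nine million tokens every second, which is why the infrastructure layer belongs in the same conversation as the models themselves.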

Why This Was Bigger Than A Single Performance

Events like the Spring Festival Gala matter because they change perception.

Before a public broadcast like this, many model discussions live inside:

- benchmarks

- social media demos

- developer circles

After a broadcast like this, the conversation becomes:

- can these systems support real production?

- can they handle pressure and scale?

- can AI-generated media become part of mainstream creative pipelines?

That is the shift this gala represented.

The Visible Layer: Robotics

The robots were the visible layer of a deeper AI stack. Four robotics companies delivered performances:

- Unitree G1 robots performed Kung Fu backflips

- Noetix Bumi robots performed a comedy sketch with veteran actress Cai Ming, featuring a lifelike bionic robot double with 32 facial motors

- MagicLab robots danced to "We Are Made in China"

- Galbot contributed additional robotic performances

Without Doubao's language models, the robots could not have interacted with human performers. Without Seedance, the visual spectacles would not have existed. Without Volcengine's infrastructure, the real-time interactions would have collapsed under the load.

What The Gala Suggested About ByteDance’s Strategy

The clearest strategic read is that ByteDance wants to be taken seriously across more than one AI category.

The event suggested a product story built around:

- media generation

- assistant-style intelligence

- infrastructure support

- high-visibility consumer and enterprise relevance

That is a much larger ambition than "we have a good video model."

Why Developers And Product Teams Should Care

Even if you never build for a gala-scale event, this matters because it hints at how ByteDance wants its AI stack to be perceived in the broader market.

For developers and product teams, the takeaway is not "copy the gala."

It is:

ByteDance is trying to position its AI products as parts of one stack, not as disconnected tools.

That affects how teams should interpret future model launches, ecosystem changes, and platform decisions from the company.

Chinese AI Models Available via API

Several of the models that powered the gala's technology, along with comparable models from other Chinese labs, are accessible via API:

| Model | Type | Gala connection or context |
|---|---|---|
| Seedance | Video Generation | Powered Spring Festival Gala visual effects |
| Seedream | Image Generation | ByteDance's image model (updated alongside Seedance 2.0) |
| Kling | Video Generation | Kuaishou's leading text-to-video system |
| Wan 2.6 | Video Generation | Alibaba's video generation model |
| DeepSeek | Language Model | China's leading open-source reasoning model |
| Doubao | Language Model | The brain behind the gala's AI interactions |

Why This Matters On EvoLink

For EvoLink, the value of a story like this is not that it advertises ByteDance.

It is that it gives teams a reason to compare and route across model families from one place:

- ByteDance-family video routes

- other Chinese video models

- non-ByteDance alternatives
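As a rough illustration of what "compare and route across model families" can mean in practice, here is a minimal sketch of a preference-ordered fallback. It is not EvoLink's API or implementation: the `VideoRoute` type, the family labels, the `available` flag, and `pick_route` are all hypothetical names invented for this example, and the model names are simply those mentioned in this article.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class VideoRoute:
    model: str       # model name as mentioned in this article
    family: str      # hypothetical grouping label
    available: bool  # hypothetical health flag; a real system would probe this


# Hypothetical routing table for illustration only.
ROUTES = [
    VideoRoute("Seedance", "bytedance", True),
    VideoRoute("Kling", "other-chinese", True),
    VideoRoute("Wan 2.6", "other-chinese", True),
]


def pick_route(routes: List[VideoRoute],
               preferred_families: List[str]) -> Optional[VideoRoute]:
    """Return the first available route, trying families in preference order."""
    for family in preferred_families:
        for route in routes:
            if route.family == family and route.available:
                return route
    return None  # nothing available in any preferred family


choice = pick_route(ROUTES, ["bytedance", "other-chinese"])
print(choice.model if choice else "no route available")
```

The design point is only that the caller states a preference order over families while the selection logic stays the same, so adding or swapping a model family means changing data rather than code.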

Final Take

The 2026 Spring Festival Gala was not just a cultural event with AI decoration.

It was a high-visibility demonstration of how ByteDance wants to frame its AI stack:

- Seedance for visual generation

- Doubao for intelligence and interaction

- a broader infrastructure layer for public-scale deployment

That is why the gala mattered. It turned ByteDance's AI story from a set of product pages into a visible ecosystem narrative.

FAQ

Why was Seedance 2.0 relevant to the gala story?

Because the event highlighted AI-generated visual spectacle, which fits Seedance 2.0's broader positioning as a video-generation layer inside ByteDance's stack.

Was the gala mainly a robot story?

No. The more important reading is that it showcased a broader AI stack working across media, interaction, and production infrastructure.

Why does Doubao matter in this context?

Because it represents the language and interaction layer in ByteDance's broader AI strategy.

What did the gala suggest about ByteDance's AI direction?

It suggested ByteDance wants to be seen as building an integrated AI ecosystem rather than isolated point models.

Why should developers care about a televised event like this?

Because large public events can reveal product ambition, positioning, and confidence in production readiness at a scale normal demos cannot.

Was this article meant to explain API access?

No. The article notes which related models are available via API, but its subject is the event's market meaning, not access details or pricing.