How to Use GPT-5.4 in OpenClaw: Setup Guide (5 Minutes)

OpenClaw merged GPT-5.4 support on March 6, 2026, so users on current builds may be able to use it natively. If your installed version predates that change, you can still use GPT-5.4 through a custom OpenAI-compatible provider such as EvoLink.

If you tried GPT-5.4 with an older OpenClaw build and ran into model-selection errors, the problem is real, but the status changed quickly. GitHub Issue #36817 documented the failure on pre-merge builds, and OpenClaw merged GPT-5.4 support in PR #36590 on March 6, 2026.

This means the right guide is no longer "OpenClaw does not support GPT-5.4 yet." The accurate version is:

- If you are on a current build, check native support first.

- If you are on an older build or you want to route through a gateway, add a custom provider.

Why GPT-5.4 Matters for OpenClaw Users

OpenClaw is built for agent workflows, tool use, and long-context tasks. GPT-5.4 is relevant because OpenAI positioned it as a stronger model for those exact use cases.

Native Computer Use

OpenAI reports 75.0% on OSWorld-Verified, above the 72.4% human baseline. If your OpenClaw workflow involves browser actions, desktop interaction, or multi-step tool execution, this is one of GPT-5.4's most important upgrades.

1.05M Token Context Window

The official GPT-5.4 API model page lists a 1,050,000-token context window and 128,000 max output tokens. For large repositories, docs, and long agent runs, that is a practical upgrade over smaller-context models.
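As a rough illustration of what those limits mean in practice, the sketch below estimates whether a prompt plus a requested output fits in the window. The 4-characters-per-token ratio is a crude assumption for English text rather than a real tokenizer, the function names are hypothetical, and the sketch assumes input and output share one context window.

```python
# Limits quoted above from the GPT-5.4 API model page.
CONTEXT_WINDOW_TOKENS = 1_050_000
MAX_OUTPUT_TOKENS = 128_000

# Assumption: roughly 4 characters per token for English text.
# A real tokenizer will give different counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(prompt: str, requested_output_tokens: int) -> bool:
    """Return True if the estimated prompt plus requested output fits.

    Assumes input and output tokens count against the same window.
    """
    if requested_output_tokens > MAX_OUTPUT_TOKENS:
        return False
    total = estimate_tokens(prompt) + requested_output_tokens
    return total <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    repo_dump = "x" * 2_000_000  # about 500,000 estimated tokens
    print(fits_in_context(repo_dump, 100_000))
```

For real budgeting, swap the character heuristic for the token counts your provider reports.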

Better Tool Selection

OpenAI says GPT-5.4 can reduce tokens by 47% in a large-tool benchmark while maintaining the same accuracy. That matters for OpenClaw setups with many tools, MCP servers, or long tool descriptions.

Stronger Real-World Knowledge Work

OpenAI reports GPT-5.4 reaching 83.0% on GDPval versus 70.9% for GPT-5.2. GDPval is a knowledge-work benchmark, not a generic hallucination rate, so it is more accurate to describe this as a gain in high-value task performance rather than a direct hallucination metric.

The Actual OpenClaw Status on March 6, 2026

Here is the current state:

- GPT-5.4 support was merged into OpenClaw on March 6, 2026.

- Issue #36817 documented that some builds rejected openai-codex/gpt-5.4 as "not allowed."

- Because the fix is already merged, it is no longer accurate to say OpenClaw broadly "doesn't support GPT-5.4 yet."

So before editing config files, do this first:

- Update OpenClaw if you are not on the latest build.

- Run openclaw models list.

- Select the GPT-5.4 model reference shown by your installation.

If GPT-5.4 appears in your model list, use native support and stop there.
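The check above can be scripted. This is a minimal sketch that assumes openclaw models list prints model references as plain, whitespace-separated text. The real output format may differ, and the helper names are hypothetical.

```python
import shutil
import subprocess


def find_model_refs(listing: str, model_id: str = "gpt-5.4") -> list[str]:
    """Return tokens from the listing that name the given model exactly.

    Matches the bare ID ("gpt-5.4") or a provider-prefixed reference
    ("openai-codex/gpt-5.4"), but not variants such as "gpt-5.4-pro".
    """
    refs = []
    for token in listing.split():
        if token == model_id or token.endswith("/" + model_id):
            refs.append(token)
    return refs


def list_models() -> str:
    """Run `openclaw models list` and return its stdout as text."""
    if shutil.which("openclaw") is None:
        raise RuntimeError("openclaw CLI not found on PATH")
    result = subprocess.run(
        ["openclaw", "models", "list"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

Calling find_model_refs(list_models()) returns the matching references. An empty list suggests the build predates the merge or the model is not enabled, in which case updating OpenClaw or adding a custom provider is the next step.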

When a Custom Provider Still Makes Sense

You may still want a custom provider if:

- your installed OpenClaw build predates the GPT-5.4 merge

- you want to route traffic through EvoLink or another OpenAI-compatible gateway

- you want one account or one bill across multiple upstream providers

How to Add EvoLink as a Custom Provider

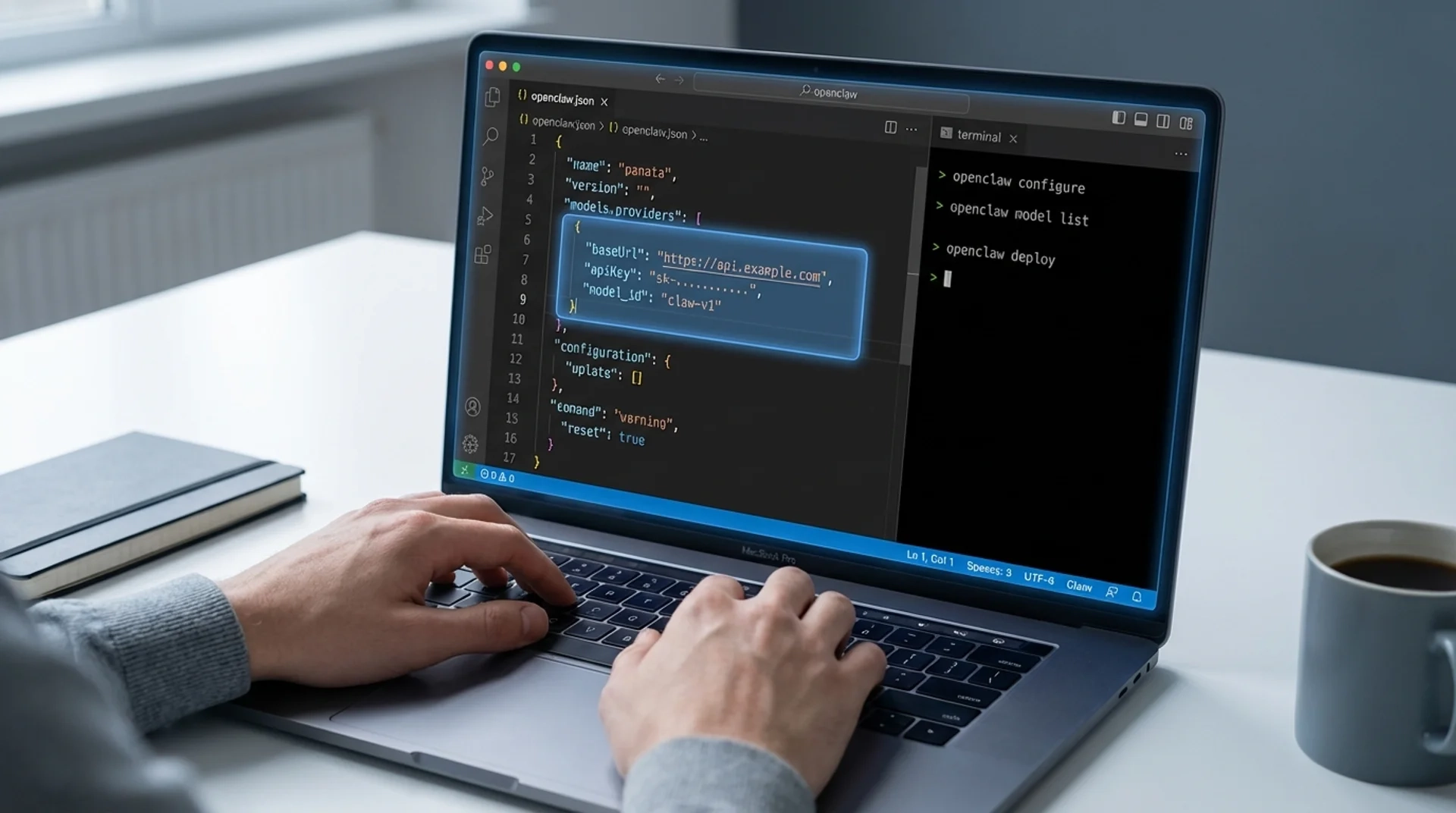

Custom providers belong under models.providers, not a top-level providers block. Open openclaw.json and add a provider using the values from your EvoLink dashboard or integration docs:

    {
      "models": {
        "mode": "merge",
        "providers": {
          "evolink-openai": {
            "api": "openai-completions",
            "baseUrl": "https://YOUR_EVOLINK_OPENAI_BASE_URL",
            "apiKey": "${EVOLINK_API_KEY}",
            "models": [
              {
                "id": "gpt-5.4",
                "name": "GPT-5.4"
              }
            ]
          }
        }
      },
      "agents": {
        "defaults": {
          "model": {
            "primary": "evolink-openai/gpt-5.4"
          }
        }
      }
    }

Important notes:

- Replace the placeholder base URL with the endpoint shown in your EvoLink account or docs.

- Use the exact model ID that your provider exposes.

- If your provider has not enabled GPT-5.4 for your account yet, the config will not work until rollout is complete.
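Before restarting anything, it can help to sanity-check the file. The sketch below is not part of OpenClaw. It assumes openclaw.json is strict JSON, that the primary model reference has the form provider/model-id as in the example config, and that you are using the custom-provider route (a native reference such as openai-codex/gpt-5.4 would be reported as an unknown provider). The function names are hypothetical.

```python
import json


def check_config(config: dict) -> list[str]:
    """Return a list of problems found; an empty list means none detected."""
    problems = []
    if "providers" in config:
        problems.append("top-level 'providers' found; use models.providers")

    providers = config.get("models", {}).get("providers", {})
    if not providers:
        problems.append("no providers defined under models.providers")

    primary = (
        config.get("agents", {}).get("defaults", {}).get("model", {}).get("primary")
    )
    if primary is None:
        problems.append("agents.defaults.model.primary is not set")
        return problems

    # Split "provider/model-id" at the first slash.
    provider_name, _, model_id = primary.partition("/")
    provider = providers.get(provider_name)
    if provider is None:
        problems.append(f"primary model uses unknown provider '{provider_name}'")
        return problems

    known_ids = {m.get("id") for m in provider.get("models", [])}
    if model_id not in known_ids:
        problems.append(f"model '{model_id}' is not listed under '{provider_name}'")
    if "YOUR_" in provider.get("baseUrl", ""):
        problems.append("baseUrl still contains the placeholder value")
    return problems


def check_file(path: str) -> list[str]:
    """Load a strict-JSON config file and run the checks on it."""
    with open(path, encoding="utf-8") as f:
        return check_config(json.load(f))
```

Run check_file against your own config path. The checks only catch structural slips, such as a misplaced providers block or a model ID that does not match the primary reference. They cannot tell whether the gateway has actually enabled GPT-5.4 for your account.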

Set the Model

After saving the file, run:

    openclaw models list
    openclaw models set evolink-openai/gpt-5.4
    openclaw models status

The docs checked for this guide do not confirm an openclaw chat verification command, so it is better not to rely on that in setup instructions.

Pricing: What Is Actually Confirmed

The pricing claims in the earlier draft were too aggressive and, in some cases, wrong.

Confirmed public pricing:

- OpenAI gpt-5.4: $2.50 / 1M input, $0.25 / 1M cached input, $15.00 / 1M output

- OpenAI gpt-5.4-pro: $30.00 / 1M input, $180.00 / 1M output

- OpenRouter openai/gpt-5.4: $2.50 / 1M input, $15.00 / 1M output

gpt-5.4-beta model ID.
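To turn the OpenAI per-million rates above into a per-request figure, a small calculator is enough. This sketch uses only the confirmed OpenAI prices listed above. It assumes flat per-token billing with no tiered or long-context surcharges, and the function name is hypothetical.

```python
# USD per 1M tokens, copied from the confirmed OpenAI prices above.
PRICES = {
    "gpt-5.4": {"input": 2.50, "cached_input": 0.25, "output": 15.00},
    "gpt-5.4-pro": {"input": 30.00, "output": 180.00},
}


def estimate_cost(model, input_tokens, output_tokens, cached_input_tokens=0):
    """Estimate the USD cost of one request.

    cached_input_tokens is the part of input_tokens billed at the cached rate.
    Models without a listed cached rate bill all input at the normal rate.
    """
    price = PRICES[model]
    cached_rate = price.get("cached_input", price["input"])
    fresh_tokens = input_tokens - cached_input_tokens
    total = (
        fresh_tokens * price["input"]
        + cached_input_tokens * cached_rate
        + output_tokens * price["output"]
    )
    return total / 1_000_000


if __name__ == "__main__":
    # 200K input tokens and 10K output tokens on each model.
    print(f"gpt-5.4:     ${estimate_cost('gpt-5.4', 200_000, 10_000):.2f}")
    print(f"gpt-5.4-pro: ${estimate_cost('gpt-5.4-pro', 200_000, 10_000):.2f}")
```

For the same 200K-in, 10K-out request this works out to about $0.65 on gpt-5.4 and $7.80 on gpt-5.4-pro, which is why the standard model is the sensible default for long agent runs.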

FAQ

1. Does OpenClaw officially support GPT-5.4?

Yes, support was merged on March 6, 2026. Older installed builds may still need a custom provider or an update.

2. Can I use GPT-5.4 through the OpenAI API today?

Yes. OpenAI lists gpt-5.4 and gpt-5.4-pro in the API docs.

3. What reasoning parameter should I use?

Use reasoning.effort, not reasoning_effort.

4. Should I always use a custom provider?

No. Native support is simpler if your OpenClaw build already includes GPT-5.4. A custom provider is mainly useful for older builds or gateway routing.

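5. Why do I get a "model not allowed" error?

Most likely your installed build predates the GPT-5.4 merge. Update OpenClaw, or add a custom provider under models.providers.

6. How much does GPT-5.4 cost compared to other models?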

GPT-5.4 costs $2.50/1M input and $15.00/1M output, with a 1.05M-token context window (GPT-4 Turbo, by comparison, offered 128K). GPT-5.4 Pro costs $30.00/1M input and $180.00/1M output.

7. What's the difference between gpt-5.4 and gpt-5.4-pro?

GPT-5.4 Pro offers higher performance on complex reasoning tasks and extended thinking time. Use standard GPT-5.4 for most agent workflows unless you need maximum reasoning capability.

8. How do I migrate from GPT-4 to GPT-5.4 in OpenClaw?

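If your build supports GPT-5.4 natively, run openclaw models set openai-codex/gpt-5.4, or use whichever GPT-5.4 reference your installation lists. If using a custom provider, update the model ID in your openclaw.json config and restart the gateway.

Wrapping Up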

The practical answer is simple: GPT-5.4 is real, OpenClaw support has already landed, and the right setup path depends on your installed build.

If GPT-5.4 appears in openclaw models list, use native support. If it does not, add a custom provider under models.providers and use the exact endpoint and model ID exposed by your gateway.

Try EvoLink for OpenClaw Routing

If you want to route GPT-5.4 through one unified API and keep the option to switch providers later, create an EvoLink account and verify the currently available model list before updating your OpenClaw config.

Sources Checked for This Revision

- OpenAI GPT-5.4 release announcement

- OpenAI GPT-5.4 and GPT-5.4 Pro API model pages

- OpenAI API pricing page

- OpenClaw docs for models and custom providers

- OpenClaw Issue #36817 and PR #36590

- OpenRouter openai/gpt-5.4 listing