How to Use GPT Image 2 with Seedance 2.0: Why Teams Pair Them for Storyboards and Short Videos

OpenAI documents GPT Image 2 under the API model name gpt-image-2. ByteDance and BytePlus publicly document Seedance 2.0 as a multimodal video model that supports text, image, audio, and video inputs. That makes the pairing easy to understand: gpt-image-2 is well suited to pre-production visual structure, while Seedance 2.0 is better suited to motion, timing, and audiovisual execution.

TL;DR

- Use gpt-image-2 when you need character sheets, storyboard grids, keyframes, title cards, posters, or other structured visual assets.

- Use Seedance 2.0 when you already know what the scene should look like and now need motion, camera behavior, and short-form video output.

- The pairing is usually stronger than forcing one model to do everything in a single prompt.

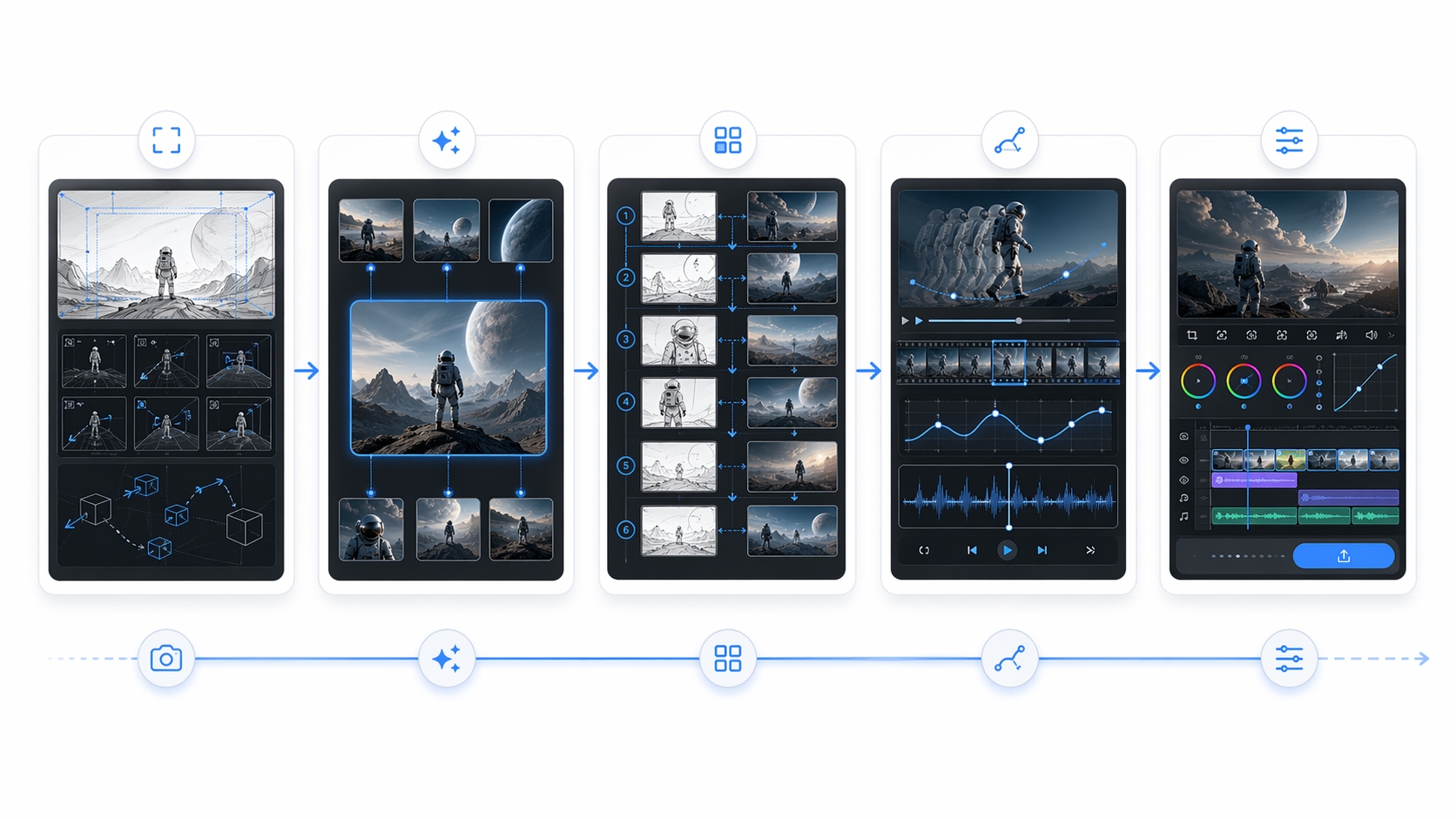

- The most common workflow is simple: define shots -> generate visual anchors -> build storyboard or keyframes -> animate in Seedance 2.0 -> finish titles and pacing in editing.

- This pairing fits trailers, teasers, visual narratives, product shorts, and social clips better than it fits pure talking-head or single-image tasks.

What Each Model Is Actually Good At

The cleanest way to think about this pairing is by production stage, not by hype cycle.

| Stage | GPT Image 2 (gpt-image-2) | Seedance 2.0 |
|---|---|---|
| Primary role | Pre-production visual design | Motion and short-form video generation |
| Best inputs | Text plus optional image references | Text, image, audio, and video inputs |
| Best outputs | Character sheets, storyboard pages, comic-style panels, posters, keyframes, title cards | Image-to-video, multimodal reference-to-video, edit-oriented video workflows |
| Best use | Locking visual structure and consistency | Adding timing, motion, camera direction, and audiovisual feel |
| Officially documented strengths | Fast, high-quality image generation and editing | Multimodal video generation with image, audio, and video references |

If the open question is:

- what the character should look like

- what the frame should contain

- how dense the visual information should be

- how a sequence should be laid out before animation

then GPT Image 2 is usually the better place to start.

If the open question is:

- how the scene should move

- how the camera should behave

- how the clip should progress from beat to beat

- how the sequence should feel over time

then Seedance 2.0 is usually the better tool.

Why Teams Pair Them Instead of Forcing One Model to Do Everything

1. Visual consistency gets decided earlier

Direct text-to-video can work well for short experiments, but it also has to solve too many things at once: character design, composition, motion, scene logic, pacing, and sometimes even audio. When teams move those early visual decisions into GPT Image 2 first, the later video stage has fewer chances to drift.

This matters most when the output is not just "a nice clip," but something with repeatable structure:

- a trailer

- a teaser

- a social ad

- a short sequence with recurring characters

- a stylized visual narrative

2. Story pacing becomes easier to control

Instead of asking a video model to invent the whole sequence, the workflow becomes:

- decide the shots

- show the shots visually

- animate the shots

That is usually easier to debug than one giant prompt.

3. Text and layout-heavy visuals survive better

OpenAI positions GPT Image 2 as a strong image generation and editing model, and the ChatGPT Images 2.0 launch materials heavily emphasize structured layouts, multilingual text rendering, comic pages, reference sheets, and editorial compositions. That makes it a better fit for assets like:

- title cards

- poster-style layouts

- comic or manga-style pages

- interface-like visuals

- branded or information-dense compositions

Those are exactly the kinds of assets that often break when you try to generate them directly inside the motion step.

The Workflow That Shows Up Most Often

The pairing usually falls into one of two patterns.

| Workflow | Start in GPT Image 2 | Finish in Seedance 2.0 | Best fit |
|---|---|---|---|
| Storyboard-first | 3x3 storyboard grid or multi-panel story page | Animate from the storyboard as image-to-video or reference-driven video | Trailers, teasers, short narrative clips |
| Keyframe-first | Character sheet, style anchor, 4-6 keyframes, title cards | Animate each visual as one clip or sequence | Product shorts, character PVs, social edits, stylized ads |

A Practical Lightweight Process

You do not need a huge pipeline to make this useful. For most teams, a five-step workflow is enough.

1. Define shot intent first

Before prompting either model, write a short shot list:

Goal: 15-second teaser

Shot 1: establish subject and mood

Shot 2: close-up detail introduces tension

Shot 3: world or product context expands

Shot 4: movement or conflict appears

Shot 5: final reveal or title hold

That is enough. The goal is not prompt poetry. The goal is to decide what the clip needs to say.
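The same shot list can also be kept as data, so the runtime budget is explicit before any prompting starts. The sketch below is illustrative only: the `Shot` class, its `weight` field, and `allocate_durations` are hypothetical names, and the even split is just a starting assumption that a team would adjust by hand.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    number: int
    intent: str
    weight: float = 1.0  # hypothetical: relative share of the total runtime


def allocate_durations(shots: list[Shot], total_seconds: float) -> dict[int, float]:
    """Split the total runtime across shots in proportion to their weights."""
    total_weight = sum(shot.weight for shot in shots)
    if total_weight <= 0:
        raise ValueError("shot weights must sum to a positive number")
    return {
        shot.number: round(total_seconds * shot.weight / total_weight, 2)
        for shot in shots
    }


# The 15-second teaser from the shot list above, with equal weights.
TEASER = [
    Shot(1, "establish subject and mood"),
    Shot(2, "close-up detail introduces tension"),
    Shot(3, "world or product context expands"),
    Shot(4, "movement or conflict appears"),
    Shot(5, "final reveal or title hold"),
]

if __name__ == "__main__":
    for number, seconds in allocate_durations(TEASER, 15).items():
        print(f"Shot {number}: {seconds}s")
```

Raising the weight on one shot, for example the final title hold, gives it a larger share of the 15 seconds without changing the rest of the list.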

2. Use GPT Image 2 to lock character and style anchors

Create one or two visual anchors before you attempt a sequence:

- a character sheet or product visual anchor

- a style anchor for color, lighting, and materials

If these are unstable, the later motion stage usually gets worse, not better.

3. Build a storyboard grid or keyframe set

Choose the lighter structure that matches your workload:

- storyboard grid if you want one image that carries the whole sequence

- keyframe set if you want more shot-level control
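If a team starts with a storyboard grid and later wants shot-level control, one possible bridge is to crop each panel out of the grid and treat the crops as keyframes. The sketch below only computes the crop boxes. It assumes evenly sized panels with no gutters or borders, which a generated grid may not satisfy, and `panel_boxes` is a hypothetical helper name.

```python
def panel_boxes(
    width: int, height: int, rows: int = 3, cols: int = 3
) -> list[tuple[int, int, int, int]]:
    """Return (left, top, right, bottom) pixel boxes in reading order.

    Assumes the grid fills the whole image with equally sized panels.
    """
    if rows <= 0 or cols <= 0:
        raise ValueError("rows and cols must be positive")
    boxes = []
    for r in range(rows):
        for c in range(cols):
            # Integer division keeps adjacent boxes flush with no gaps or overlap.
            left = c * width // cols
            top = r * height // rows
            right = (c + 1) * width // cols
            bottom = (r + 1) * height // rows
            boxes.append((left, top, right, bottom))
    return boxes


if __name__ == "__main__":
    for index, box in enumerate(panel_boxes(1536, 1024), start=1):
        print(f"Panel {index}: {box}")
```

Each tuple uses the same (left, upper, right, lower) order that Pillow's `Image.crop` accepts, so the boxes can be passed to an image library directly if one is available.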

4. Move into Seedance 2.0 for motion

The documented Seedance 2.0 options include 480p and 720p outputs and durations from 4 to 15 seconds. That makes it a good second-stage tool when the visual design is already decided.

At this stage, write prompts more like direction notes than image tags. Focus on:

- what moves

- how the camera moves

- when the beat changes

- what the audio atmosphere should feel like
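The four direction notes above can be kept as structured fields and flattened into a prompt only at the last moment. This is a minimal sketch, not a Seedance API client: `MotionDirection` and its field names are hypothetical, the prompt layout is an arbitrary choice, and the resolution and duration limits are copied from this article rather than from a live API.

```python
from dataclasses import dataclass

# Limits as stated in this article; confirm against current Seedance 2.0 docs.
ALLOWED_RESOLUTIONS = {"480p", "720p"}
MIN_SECONDS, MAX_SECONDS = 4, 15


@dataclass
class MotionDirection:
    subject_motion: str  # what moves
    camera: str          # how the camera moves
    beat_change: str     # when the beat changes
    audio_mood: str      # what the audio atmosphere should feel like
    seconds: int = 5
    resolution: str = "720p"

    def validate(self) -> None:
        """Reject settings outside the limits listed above."""
        if self.resolution not in ALLOWED_RESOLUTIONS:
            raise ValueError(f"unsupported resolution: {self.resolution}")
        if not MIN_SECONDS <= self.seconds <= MAX_SECONDS:
            raise ValueError(
                f"duration must be between {MIN_SECONDS} and {MAX_SECONDS} seconds"
            )

    def to_prompt(self) -> str:
        """Flatten the notes into one direction-style prompt string."""
        self.validate()
        return (
            f"Motion: {self.subject_motion}. "
            f"Camera: {self.camera}. "
            f"Beat change: {self.beat_change}. "
            f"Audio: {self.audio_mood}."
        )


if __name__ == "__main__":
    note = MotionDirection(
        subject_motion="the courier turns toward the neon sign",
        camera="slow push-in from waist height",
        beat_change="hard cut to the close-up at the three-second mark",
        audio_mood="low city hum with distant rain",
    )
    print(note.to_prompt())
```

Keeping the notes in separate fields makes it easier to reroll one aspect, such as the camera move, while holding the others fixed.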

5. Finish titles and pacing outside the motion step

Even when the video model is strong, it is usually safer to finalize:

- title treatment

- subtitles

- pacing trims

- end cards

- final packaging

in editing, rather than asking the generation step to do every job at once.

Common Failure Points

The storyboard grid appears as the literal opening frame

This is a common side effect of storyboard-first workflows. The easiest fix is either to trim the first second in editing or to make the opening panels visually closer together so the transition feels less abrupt.
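For the trimming option, a command-line tool such as ffmpeg can drop the opening second. The sketch below only builds the argument list and does not run anything. It assumes ffmpeg is installed, the file names are placeholders, and `trim_start_command` is a hypothetical helper name.

```python
def trim_start_command(src: str, dst: str, skip_seconds: float = 1.0) -> list[str]:
    """Build an ffmpeg command that drops the first `skip_seconds` of a clip."""
    if skip_seconds < 0:
        raise ValueError("skip_seconds must be non-negative")
    return [
        "ffmpeg",
        "-y",                        # overwrite dst if it already exists
        "-ss", f"{skip_seconds:g}",  # seek to this offset in the input
        "-i", src,
        dst,                         # no codec flags: re-encode with ffmpeg defaults
    ]


if __name__ == "__main__":
    # Placeholder file names; pass the list to subprocess.run(..., check=True) to execute.
    print(" ".join(trim_start_command("clip.mp4", "clip_trimmed.mp4")))
```

Re-encoding is slower than a stream copy, but it lets the cut land on the requested time rather than on the nearest keyframe.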

Character drift starts before the video stage

This often looks like a Seedance problem, but the root cause is usually earlier. If the character sheet or keyframe set is not stable, the motion step inherits that instability. The fix is usually to strengthen the image anchors, not to reroll the video step endlessly.

Titles and logos break in motion

Text is still a fragile part of video generation. If a title or logo matters, generate it separately as a static asset first, then animate it lightly or place it in editing.

When This Pairing Fits Best

This workflow is not universal. It is best when you have a real pre-production stage, even if it is a lightweight one.

| Strong fit | Weak fit |
|---|---|
| Trailers and teasers | Single-image tasks |
| Short-form visual narratives | Pure talking-head generation |
| Social ads with shot structure | Fast one-off prompt experiments |
| Product videos that need layout planning | Workloads with no need for visual consistency |
| Character-led or style-led shorts | Cases where direct text-to-video already solves the problem cleanly |

If your main job is "generate one image," just use GPT Image 2.

If your main job is "generate one fast video clip from one prompt," you may not need the extra structure.

What This Means on EvoLink

The EvoLink angle here is not that the platform invented this workflow. It is that the workflow becomes easier to operate when image and video routes can live inside the same working surface. In practice, that lets a team:

- keep the image stage and video stage in the same model workflow

- compare route behavior without rebuilding your stack

- decide when to stay in one model family and when to hand off to another

FAQ

Is ChatGPT Images 2.0 the same as gpt-image-2?

ChatGPT Images 2.0 is the name used in OpenAI's launch materials; gpt-image-2 is the documented API model name.

Why not just generate the whole video directly?

You can, and sometimes that is the faster choice. The paired workflow becomes useful when your team needs more control over character consistency, shot order, or structured visual planning.

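Should I start with storyboard grids or with keyframes?

Start with a storyboard grid if you want one image that carries the whole sequence. Start with a keyframe set if you want more shot-level control.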

What is the main job for GPT Image 2 in this workflow?

Its main job is to create usable pre-production visuals: character sheets, visual anchors, storyboard pages, keyframes, title cards, and other structured image assets.

What is the main job for Seedance 2.0 in this workflow?

Its main job is to turn those visual assets into motion-oriented outputs through image-to-video or multimodal reference workflows, with clearer camera and timing control than a pure still-image model can provide.

Should I generate titles and logos inside the video step?

Usually no. If readability matters, it is safer to create those assets separately and add or animate them later.

When does this pairing fit badly?

It is usually overkill for single still images, simple direct video prompts, or workloads where consistency across shots does not matter much.

Sources

- OpenAI, "Introducing ChatGPT Images 2.0" (April 21, 2026): https://openai.com/index/introducing-chatgpt-images-2-0/

- OpenAI API model page for gpt-image-2: https://developers.openai.com/api/docs/models/gpt-image-2

- ByteDance Seedance 2.0 official page: https://seed.bytedance.com/en/seedance2_0

- BytePlus ModelArk Seedance 2.0 series tutorial: https://docs.byteplus.com/api/docs/ModelArk/2291680