DeepSeek V4 Flash & Pro vs GPT-5.4 vs Claude Opus 4.6: Official Pricing and Capability Comparison

This article was originally published before DeepSeek V4 was publicly documented. It has now been fully revised to reflect the official DeepSeek V4 preview launch, including public model IDs, pricing, 1M context, and the split between Flash and Pro.

Note: this article keeps the Claude Opus 4.6 comparison set. Treat this page as a historical and still-useful comparison baseline rather than the final word on the latest Claude release.

DeepSeek's official API docs now list the model IDs deepseek-v4-flash and deepseek-v4-pro, and the official pricing page now publishes pricing, 1M context, and 384K max output for both. (Sources: DeepSeek API Docs; DeepSeek Models & Pricing)

That means the comparison has changed from "can we verify DeepSeek V4 at all?" to a more useful decision:

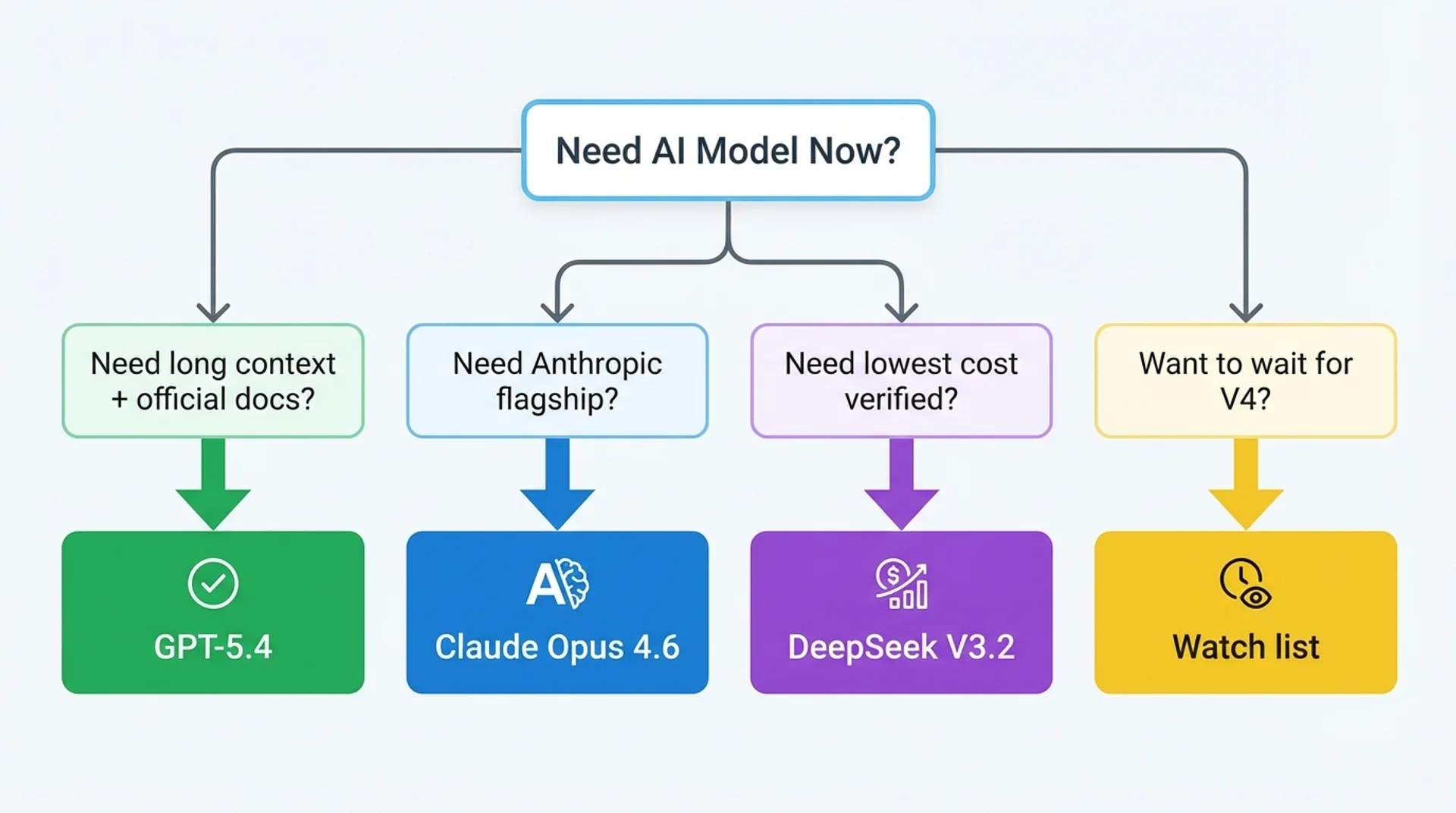

- When is DeepSeek V4 Flash the better fit?

- When is DeepSeek V4 Pro worth paying for?

- When should teams still choose GPT-5.4 or Claude Opus 4.6 instead?

TL;DR

- DeepSeek V4 Flash is now the cheapest officially documented option in this comparison set at $0.14 input / $0.28 output per 1M tokens, with 1M context and 384K max output. It is the strongest candidate for high-volume coding, agent routing, and cost-sensitive long-context workloads. (Source: DeepSeek Models & Pricing)

- DeepSeek V4 Pro is the higher-intelligence V4 route at $1.74 input / $3.48 output per 1M tokens. It is still materially cheaper than Claude Opus 4.6 and cheaper on output than GPT-5.4, while keeping the same 1M context and 384K max output. (Source: DeepSeek Models & Pricing)

- GPT-5.4 remains the clearest officially documented OpenAI option for complex professional work, with 1,050,000 context, 128,000 max output, and $2.50 input / $15.00 output per 1M tokens. (Sources: OpenAI Pricing; OpenAI GPT-5.4 Model)

- Claude Opus 4.6 remains a top-tier choice for coding and agentic tasks, with pricing at $5 input / $25 output per 1M tokens, 128K output, and 1M context in beta on the Claude Developer Platform. (Source: Anthropic Claude Opus 4.6)

What is officially verifiable now

The table below uses only currently documented official vendor information.

| Topic | DeepSeek V4 Flash | DeepSeek V4 Pro | GPT-5.4 | Claude Opus 4.6 |
|---|---|---|---|---|
| Provider | DeepSeek | DeepSeek | OpenAI | Anthropic |
| Official public status | Public API preview documented | Public API preview documented | Officially documented and available | Officially documented and available |
| Input pricing (per 1M tokens) | $0.14 (cache miss) | $1.74 (cache miss) | $2.50 | $5.00 |
| Cached input pricing (per 1M tokens) | $0.028 | $0.145 | $0.25 | Pricing varies by caching and long-context tiers |
| Output pricing (per 1M tokens) | $0.28 | $3.48 | $15.00 | $25.00 |
| Context window | 1M | 1M | 1,050,000 | 1M in beta |
| Max output | 384K | 384K | 128K | 128K |
| Thinking mode | Supported | Supported | Supported via reasoning effort | Supported via adaptive / extended thinking |
| Tool calls | Supported | Supported | Supported | Supported |
| Practical status | Best low-cost V4 route | Best higher-intelligence V4 route | Official OpenAI flagship route | Official Anthropic flagship route |

Pricing reality check

The pricing story is now straightforward because all three vendors publish usable official numbers.

| Model | Input (per 1M tokens) | Cached input (per 1M tokens) | Output (per 1M tokens) | Practical pricing takeaway |
|---|---|---|---|---|
| DeepSeek V4 Flash | $0.14 | $0.028 | $0.28 | Cheapest official route here by a wide margin |
| DeepSeek V4 Pro | $1.74 | $0.145 | $3.48 | Still much cheaper than Claude Opus 4.6 and cheaper than GPT-5.4 on output |
| GPT-5.4 | $2.50 | $0.25 | $15.00 | Premium OpenAI route for complex professional work |
| Claude Opus 4.6 | $5.00 | context-tier dependent | $25.00 | Highest-cost route here, but still a top coding and agent model |
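
To see how much the cached-input column matters, the sketch below blends the cache-miss and cache-hit input prices from the table for a given cache hit rate. It assumes cached tokens are billed at the cached rate and everything else at the cache-miss rate; the function name `blended_input_price` and the dictionary keys are illustrative labels, not vendor API identifiers (only the two DeepSeek IDs appear in the official docs cited here).

```python
# USD per 1M input tokens as (cache miss, cache hit), copied from the table above.
# Claude Opus 4.6 is omitted because its cached pricing is tier-dependent.
INPUT_PRICES = {
    "deepseek-v4-flash": (0.14, 0.028),
    "deepseek-v4-pro": (1.74, 0.145),
    "gpt-5.4": (2.50, 0.25),  # label used for this sketch, not a verified API model ID
}


def blended_input_price(model: str, cache_hit_rate: float) -> float:
    """Effective USD price per 1M input tokens for a given cache hit rate.

    Assumption: hits are billed at the cached rate, misses at the full rate.
    """
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    miss_price, hit_price = INPUT_PRICES[model]
    return cache_hit_rate * hit_price + (1.0 - cache_hit_rate) * miss_price


if __name__ == "__main__":
    # Hypothetical workload in which 80% of input tokens are served from cache.
    for name in INPUT_PRICES:
        price = blended_input_price(name, 0.8)
        print(f"{name}: ${price:.4f} per 1M input tokens")
```

Workloads that resend the same long prefix (a repository snapshot or a fixed system prompt) are where the blended price drifts furthest from the headline input price.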

Two things stand out immediately:

- DeepSeek V4 Flash is the budget winner by a large margin.

- DeepSeek V4 Pro has moved from "unverified watchlist" to a real premium-but-still-cost-efficient option.

If cost per output token matters to your workload, the gap is especially important:

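- DeepSeek V4 Flash output ($0.28 per 1M tokens) is roughly 54x cheaper than GPT-5.4 ($15.00) and roughly 89x cheaper than Claude Opus 4.6 ($25.00)

- DeepSeek V4 Pro output ($3.48 per 1M tokens) is still about 4.3x cheaper than GPT-5.4 and about 7.2x cheaper than Claude Opus 4.6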
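
The output-price gap can be checked with a few lines of arithmetic. The following is a minimal sketch using the per-1M-token list prices quoted in this article; it ignores caching and long-context tiers, and the keys `gpt-5.4` and `claude-opus-4.6` are labels chosen for this example rather than confirmed API model IDs.

```python
# USD per 1M tokens, copied from the pricing tables in this article.
PRICES = {
    "deepseek-v4-flash": {"input": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"input": 1.74, "output": 3.48},
    "gpt-5.4": {"input": 2.50, "output": 15.00},         # illustrative label
    "claude-opus-4.6": {"input": 5.00, "output": 25.00},  # illustrative label
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request at list price (no cache discount applied)."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def output_price_ratio(expensive: str, cheap: str) -> float:
    """How many times more the first model charges per output token."""
    return PRICES[expensive]["output"] / PRICES[cheap]["output"]


if __name__ == "__main__":
    # Hypothetical request: 200K tokens in, 20K tokens out.
    for name in PRICES:
        print(f"{name}: ${request_cost(name, 200_000, 20_000):.4f}")
    ratio = output_price_ratio("gpt-5.4", "deepseek-v4-flash")
    print(f"GPT-5.4 vs Flash output price: {ratio:.1f}x")
```

Swapping in your own token counts gives a per-request figure that is easier to budget against than per-million prices.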

The biggest practical change: DeepSeek V4 is now Flash vs Pro

Earlier DeepSeek V4 writeups treated V4 like one hypothetical model. That is no longer accurate for decision-making.

Today the more useful framing is:

- Choose Flash when cost, throughput, and broad deployment matter most

- Choose Pro when you want stronger reasoning quality but still want to stay below closed-model pricing

That makes DeepSeek V4 less like a single competitor to GPT-5.4 or Opus 4.6 and more like a two-tier product family.
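
One way to picture the two-tier framing is a small router that defaults to Flash and escalates to Pro. This is only a sketch: the `Task` fields `hard_reasoning` and `flash_attempt_failed` are hypothetical signals that your own pipeline would have to supply, and only the two model IDs come from the DeepSeek docs cited in this article.

```python
from dataclasses import dataclass

FLASH = "deepseek-v4-flash"
PRO = "deepseek-v4-pro"


@dataclass
class Task:
    prompt: str
    hard_reasoning: bool = False        # hypothetical flag set by the caller
    flash_attempt_failed: bool = False  # hypothetical flag set after a failed Flash run


def pick_deepseek_model(task: Task) -> str:
    """Default to the cheap tier; escalate only when there is a reason to."""
    if task.hard_reasoning or task.flash_attempt_failed:
        return PRO
    return FLASH


if __name__ == "__main__":
    print(pick_deepseek_model(Task("Summarize this changelog")))
    print(pick_deepseek_model(Task("Plan a multi-service refactor", hard_reasoning=True)))
```

Keeping Flash as the default means the more expensive tier is only paid for on requests that have shown a need for it.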

Which model fits which workflow

Choose DeepSeek V4 Flash if you want the best cost-performance tradeoff

Flash is the strongest fit when you need:

- high-volume coding assistance

- cost-sensitive agent pipelines

- large-context document or repository ingestion

- routing defaults that must stay cheap

Choose DeepSeek V4 Pro if you want a premium V4 route without closed-model pricing

Pro is the stronger fit when you need:

- deeper reasoning than a budget route

- more difficult coding and analysis tasks

- longer-form structured output

- a step up from Flash without jumping all the way to Claude Opus 4.6 pricing

For many teams, Pro is not a universal replacement for GPT-5.4 or Opus 4.6. It is a lower-cost premium option worth evaluating side by side.

Choose GPT-5.4 if you want OpenAI's officially documented flagship route

GPT-5.4 remains attractive when you want:

- official OpenAI platform support

- a documented 1,050,000 context window

- 128,000 max output

- a familiar OpenAI developer workflow

Choose Claude Opus 4.6 if your top priority is frontier Anthropic coding and agent work

Claude Opus 4.6 remains strong when you want:

- Anthropic's flagship coding model

- extended and adaptive thinking controls

- long-running agentic workflows

- Claude Platform features such as context compaction and 1M context beta

Context and output limits matter more than before

The DeepSeek V4 update also changes the long-context conversation.

Previously, one practical reason to choose GPT-5.4 or Claude Opus 4.6 was that DeepSeek V4 was not publicly documented. Now DeepSeek is officially in the same planning conversation because both Flash and Pro expose:

- 1M context

- 384K max output

That matters for:

- repo-scale code understanding

- long legal or research documents

- long multi-step agent loops

- tasks that need much larger single-response outputs

On pure max-output headroom, DeepSeek V4's 384K limit is three times the 128K documented for GPT-5.4 and Claude Opus 4.6, based on current official docs.
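
For planning purposes, the documented limits can be turned into a quick feasibility check. The sketch below makes two assumptions that the vendor docs would need to confirm: "1M" and "384K" are treated as 1,000,000 and 384,000 tokens, and input plus requested output are assumed to share the context window. The non-DeepSeek keys are labels for this example, not confirmed API model IDs.

```python
# (context window, max output) in tokens. "1M", "384K" and "128K" are
# approximated as round decimal numbers; exact vendor values may differ.
LIMITS = {
    "deepseek-v4-flash": (1_000_000, 384_000),
    "deepseek-v4-pro": (1_000_000, 384_000),
    "gpt-5.4": (1_050_000, 128_000),
    "claude-opus-4.6": (1_000_000, 128_000),  # 1M context is in beta
}


def fits(model: str, input_tokens: int, requested_output: int) -> bool:
    """True if the request stays within both documented limits.

    Assumption: input and output tokens share the context window.
    """
    context, max_output = LIMITS[model]
    within_output = requested_output <= max_output
    within_context = input_tokens + requested_output <= context
    return within_output and within_context


def models_that_fit(input_tokens: int, requested_output: int) -> list[str]:
    """All models in LIMITS that can serve the request, in table order."""
    return [m for m in LIMITS if fits(m, input_tokens, requested_output)]


if __name__ == "__main__":
    # Hypothetical job: 500K tokens of repository context, 200K tokens of output.
    print(models_that_fit(500_000, 200_000))
```

Under these assumptions, a request that needs more than 128K tokens in a single response is only served by the two DeepSeek V4 routes, while a very large input with a modest output can still favor GPT-5.4's slightly larger window.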

Recommended decision by use case

| Use case | Best fit | Why |
|---|---|---|
| Need the lowest-cost official long-context route | DeepSeek V4 Flash | Cheapest official pricing here with 1M context and 384K output |
| Need a stronger premium DeepSeek route | DeepSeek V4 Pro | Higher-end V4 option without GPT / Claude-level pricing |
| Need an official OpenAI flagship model | GPT-5.4 | OpenAI-documented flagship with 1,050,000 context and 128K output |
| Need Anthropic's top coding and agent model | Claude Opus 4.6 | Strong Anthropic flagship with 1M beta context and 128K output |
| Need one model to evaluate for broad production routing | Start with Flash, then test Pro | Flash covers cost-sensitive routing; Pro covers harder workloads |

What changed from the old March 2026 conclusion

The old conclusion for this topic was:

- DeepSeek V4 was still a watchlist item

- V3.2 was the practical official DeepSeek baseline

- teams should not model budgets around V4 yet

That is no longer the correct conclusion.

- DeepSeek V4 is officially documented and usable in preview

- DeepSeek V4 Flash and Pro should now be evaluated directly

- V3.2 is no longer the right planning baseline for V4-focused comparisons

FAQ

1. Is DeepSeek V4 officially available now?

Yes. DeepSeek's official API docs now list deepseek-v4-flash and deepseek-v4-pro, and Reuters reported on April 24, 2026 that DeepSeek launched preview versions of V4. (Sources: DeepSeek API Docs; Reuters via Investing.com)

2. Can I now compare DeepSeek V4 pricing with GPT-5.4 and Claude Opus 4.6 responsibly?

3. Which DeepSeek V4 variant is the better first test: Flash or Pro?

For most teams, Flash is the better first test because it is dramatically cheaper while keeping 1M context and 384K max output. If your workloads are harder reasoning or coding tasks, then test Pro next.

4. Does GPT-5.4 still have an advantage?

5. Does Claude Opus 4.6 still have an advantage?

6. What is the cheapest officially documented option in this comparison?

DeepSeek V4 Flash, at $0.14 input / $0.28 output per 1M tokens.

7. Should I still use DeepSeek-V3.2 as my budget baseline?

Not for V4 planning. If your topic is specifically DeepSeek V4, the better baseline is now V4 Flash and V4 Pro, because those are the models officially documented today.

8. Where should I go if I want route details and implementation guidance?

Sources

- DeepSeek API Docs

- DeepSeek Models & Pricing

- OpenAI API Pricing

- OpenAI GPT-5.4 Model

- Anthropic Claude Opus 4.6

- Anthropic Claude Opus 4.7

- China's AI darling DeepSeek previews new model | Reuters via Investing.com