Seedance 2.0 vs Kling 3.0 vs Sora 2: Which Video API Fits Your Workflow?

The more useful question is not which model wins overall. It is:

Which one fits the way my team actually produces video?

Quick Fit Table

| Model | Best fit | Main strength | Main tradeoff |
|---|---|---|---|
| Seedance 2.0 | Control-heavy creative teams | Reference-driven direction and structured generation | Higher operator complexity |
| Kling 3.0 | Short-form production teams | Practical repeat generation and strong motion fit | Less differentiated creative control |
| Sora 2 | Premium realism-first teams | Stronger realism and cleaner premium baseline | Less reference-oriented control than Seedance |

Feature Comparison

| Feature | Seedance 2.0 | Kling 3.0 | Sora 2 |
|---|---|---|---|
| Duration | Up to 15s | 3-15s | 4/8/12s |
| Main workflow style | Reference-heavy | Production-friendly short video | Officially documented video API |
| Documented modes | T2V, I2V, V2V, reference-to-video | T2V, I2V | T2V, I2V |
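The three duration rules in the table have different shapes: an upper limit, a range, and a fixed set of presets. A minimal pre-flight check might look like the sketch below. It assumes whole-second durations and, because the table gives no lower bound for Seedance 2.0, treats any positive length up to 15s as valid. The model strings are display names, not API identifiers.

```python
# Display names for the three models in this comparison (not API model IDs).
MODELS = ("Seedance 2.0", "Kling 3.0", "Sora 2")


def duration_allowed(model: str, seconds: int) -> bool:
    """Check a whole-second duration against the limits in the feature table."""
    if model == "Seedance 2.0":
        # "Up to 15s"; the lower bound is an assumption (any positive length).
        return 0 < seconds <= 15
    if model == "Kling 3.0":
        return 3 <= seconds <= 15  # "3-15s"
    if model == "Sora 2":
        return seconds in (4, 8, 12)  # fixed presets only
    raise ValueError(f"unknown model: {model}")


def models_for_duration(seconds: int) -> list[str]:
    """Return every model whose documented limits allow this clip length."""
    return [m for m in MODELS if duration_allowed(m, seconds)]


if __name__ == "__main__":
    print(models_for_duration(10))  # ['Seedance 2.0', 'Kling 3.0']
    print(models_for_duration(12))  # ['Seedance 2.0', 'Kling 3.0', 'Sora 2']
```

A 10s request rules out Sora 2 because 10s is not one of its presets, while 12s fits all three.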

Seedance 2.0: Best for Directed Creative Control

Reach for Seedance 2.0 when the work calls for:

- references

- camera intent

- structured sequences

- stronger creative shaping

Seedance 2.0 is the strongest of the three when the operator already knows what they want and needs the model to follow that direction closely.

Why teams choose Seedance 2.0

| Reason | Why it matters |
|---|---|
| Reference-heavy workflow | Better fit when prompts alone are not enough |
| More directed camera behavior | Useful for stylized hero shots and structured sequences |
| Stronger audio-aware identity | Audio feels more central to the model's positioning |
| Higher operator upside | Skilled users can shape output more aggressively |

When Seedance 2.0 is the best fit

- brand or studio teams that work from references

- music, motion, or highly directed short-form creative

- teams that care about control more than simplicity

Kling 3.0: Best for Practical Short-Form Production

Reach for Kling 3.0 when the work calls for:

- repeatable short-form output

- social or e-commerce content

- operator efficiency

- high production volume

Kling 3.0 is easier to justify when you need a workhorse model instead of a more specialized creative instrument.

Why teams choose Kling 3.0

| Reason | Why it matters |
|---|---|
| Better throughput story | Easier fit for batch generation |
| Strong motion handling | Useful for people, movement, and action-driven scenes |
| Practical short-form orientation | Good match for repeatable 3-15 second work |
| Lower-friction operator experience | Easier than a reference-heavy creative workflow |

When Kling 3.0 is the best fit

- social-video pipelines

- creator or e-commerce teams

- teams that need a safer high-volume route

Kling 3.0 Pricing Reference

EvoLink lists Kling 3.0 with:

- text-to-video and image-to-video

- 3-15 second generation

- 720p and 1080p output

- pricing from $0.075/s
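Because the EvoLink figure is a starting rate, treating it as a per-second floor is a reasonable way to budget batch work. A minimal sketch, assuming cost scales linearly with generated seconds and that other settings (for example 1080p) may be priced above this floor; the function name is hypothetical:

```python
from decimal import Decimal

# "From" rate EvoLink lists for Kling 3.0, in USD per generated second.
# Assumption: cost is linear in seconds; other settings may cost more.
KLING_FROM_RATE = Decimal("0.075")


def kling_floor_cost(clips: int, seconds_per_clip: int) -> Decimal:
    """Lower-bound USD cost for a batch of equal-length Kling 3.0 clips."""
    if not 3 <= seconds_per_clip <= 15:
        raise ValueError("Kling 3.0 clips are 3-15 seconds long")
    if clips < 0:
        raise ValueError("clip count cannot be negative")
    return KLING_FROM_RATE * seconds_per_clip * clips


if __name__ == "__main__":
    print(kling_floor_cost(1, 10))    # 0.750
    print(kling_floor_cost(100, 10))  # 75.000
```

Decimal is used instead of float so that per-second rates like 0.075 multiply out exactly.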

Sora 2: Best for Realism and Premium Visual Baselines

Reach for Sora 2 when the work calls for:

- realism

- premium product visuals

- physics-sensitive scenes

- cleaner premium output without as much stylized push

Sora 2 is the stronger answer when the question is less about deep reference direction and more about a convincing premium baseline.

Why teams choose Sora 2

| Reason | Why it matters |
|---|---|
| Better realism orientation | Safer for physically grounded scenes |
| Stronger premium baseline | Useful for higher-end demo or marketing footage |
| Better subtlety in natural rendering | Helpful when close-up realism matters |
| Cleaner vendor trail | Easier to justify in more formal procurement environments |

When Sora 2 is the best fit

- premium marketing clips

- product demos

- realism-first creative work

- teams that want a safer realism-oriented default

Sora 2 Pricing Reference

OpenAI publishes:

- the video endpoint: POST /v1/videos

- supported model names, including sora-2 and sora-2-pro
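A hypothetical helper that assembles the fields for a Sora 2 request and rejects durations outside the documented presets before anything is sent. The field names (model, prompt, seconds, size) and passing seconds as a string reflect our reading of OpenAI's API reference and should be checked against the current docs; the helper itself does not call the API.

```python
from typing import Optional

# Model names and duration presets as listed in this section.
SORA_MODELS = ("sora-2", "sora-2-pro")
SORA_SECONDS = (4, 8, 12)


def build_sora_request(prompt: str, model: str = "sora-2", seconds: int = 4,
                       size: Optional[str] = None) -> dict:
    """Validate inputs and return request fields for POST /v1/videos.

    Hypothetical helper: it only builds the fields and sends nothing.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if model not in SORA_MODELS:
        raise ValueError(f"unsupported model: {model}")
    if seconds not in SORA_SECONDS:
        raise ValueError("Sora 2 durations are presets: 4, 8 or 12 seconds")
    # Assumption: the API takes the duration as a string such as "8".
    fields = {"model": model, "prompt": prompt, "seconds": str(seconds)}
    if size is not None:
        fields["size"] = size  # e.g. "1280x720"; verify allowed sizes per model
    return fields


if __name__ == "__main__":
    print(build_sora_request("A ceramic mug rotating on a turntable", seconds=8))
```

Validating locally means a 10s request fails fast in your own code instead of as an API error.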

| Model | Official OpenAI pricing | Duration presets |
|---|---|---|
| sora-2 | $0.10/s | 4s, 8s, 12s |
| sora-2-pro | $0.30/s or $0.50/s depending on size | 4s, 8s, 12s |
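To compare the two OpenAI models at the same clip length, the table's rates can be turned into a small cost function. This is a sketch under stated assumptions: "standard" and "large" are hypothetical labels for the two sora-2-pro size tiers (the table only says the rate depends on size), the lower rate is assumed to apply to the smaller size, and sora-2 is treated as having a single rate.

```python
from decimal import Decimal

# Official OpenAI per-second rates from the table above, in USD.
# "standard" / "large" are hypothetical labels for sora-2-pro's size tiers;
# assumption: the lower rate applies to the smaller size.
SORA_RATES = {
    ("sora-2", "standard"): Decimal("0.10"),
    ("sora-2-pro", "standard"): Decimal("0.30"),
    ("sora-2-pro", "large"): Decimal("0.50"),
}


def sora_clip_cost(model: str, seconds: int, size_tier: str = "standard") -> Decimal:
    """USD cost of one clip at the listed per-second rate."""
    if seconds not in (4, 8, 12):
        raise ValueError("Sora 2 durations are presets: 4, 8 or 12 seconds")
    try:
        rate = SORA_RATES[(model, size_tier)]
    except KeyError:
        raise ValueError(f"no listed rate for {model} at tier {size_tier!r}") from None
    return rate * seconds


if __name__ == "__main__":
    for model, tier in SORA_RATES:
        print(model, tier, sora_clip_cost(model, 12, tier))
```

For a 12s clip this works out to $1.20 on sora-2, $3.60 on sora-2-pro at the lower rate, and $6.00 at the higher rate.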

$0.08/s.

Decision Matrix

| If your team cares most about... | Start with |
|---|---|
| Reference control | Seedance 2.0 |
| Fast short-form throughput | Kling 3.0 |
| Realism and premium polish | Sora 2 |
| Motion-heavy social content | Kling 3.0 |
| Stylized cinematic direction | Seedance 2.0 |
| Physics-sensitive scenes | Sora 2 |

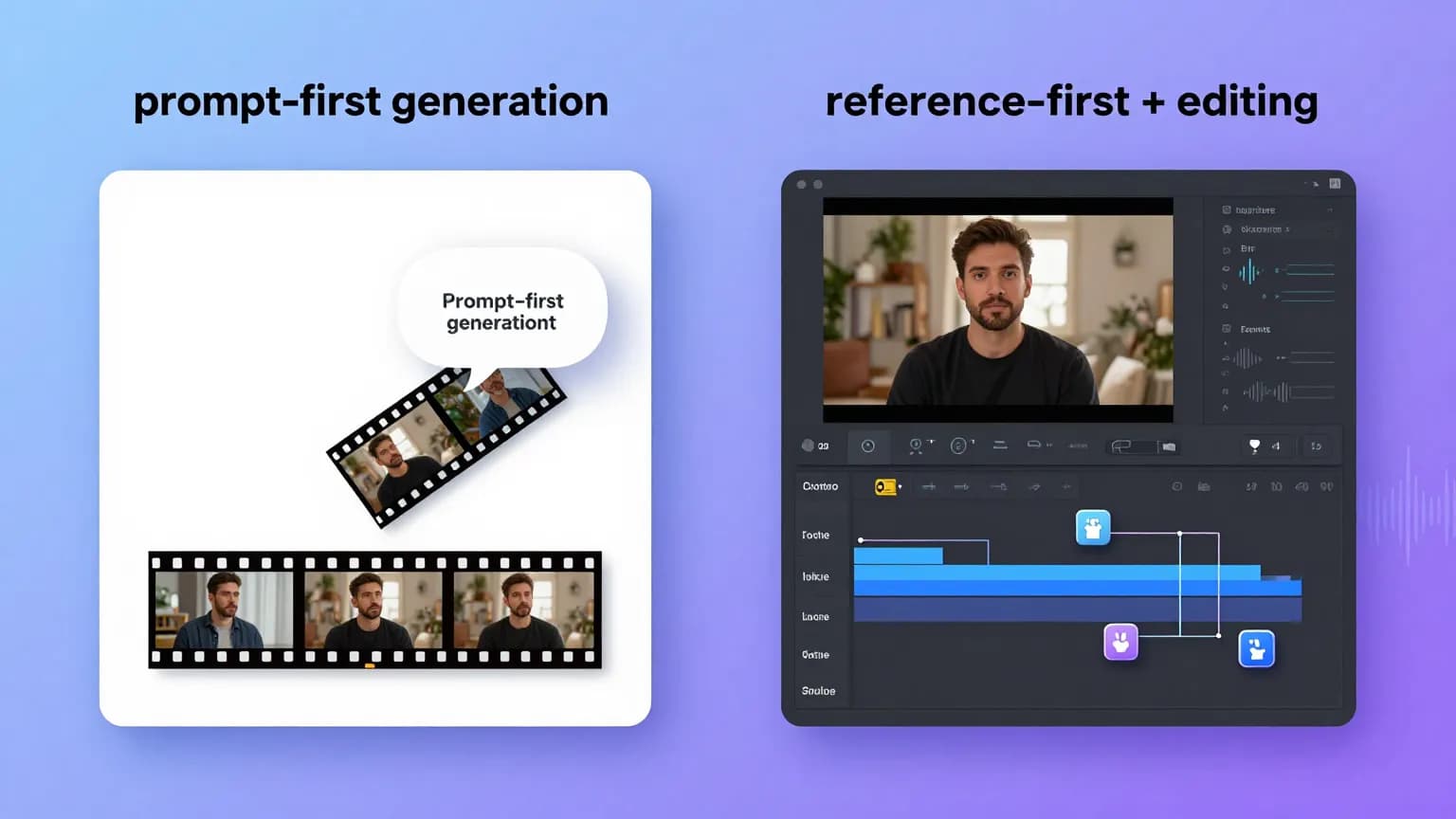

The Cleanest Way To Think About The Split

The simplest read is:

- Seedance 2.0 is the control-first option

- Kling 3.0 is the production-first option

- Sora 2 is the realism-first option

That framing is more useful than trying to force a universal winner across every workflow.

How To Route Them On EvoLink

This is where the comparison turns into a practical routing decision on EvoLink rather than a generic model essay.

Inside EvoLink, you can treat the split as an operating rule:

- Seedance 2.0 for reference-heavy and camera-directed creative work

- Kling 3.0 for repeatable short-form production

- Sora 2 for realism-first premium scenes

That is the product value behind the comparison: one integration layer, different model choices by workload.
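The operating rule above amounts to a lookup from workload to model behind one request shape. A minimal sketch, in which the workload names, the model identifiers, and the common request fields are all hypothetical placeholders rather than EvoLink's actual API:

```python
from enum import Enum


class Workload(Enum):
    """The three workload types from the routing rule above."""
    REFERENCE_CREATIVE = "reference-heavy, camera-directed creative work"
    SHORT_FORM = "repeatable short-form production"
    PREMIUM_REALISM = "realism-first premium scenes"


# Hypothetical model identifiers; substitute the IDs your EvoLink account exposes.
ROUTES = {
    Workload.REFERENCE_CREATIVE: "seedance-2.0",
    Workload.SHORT_FORM: "kling-3.0",
    Workload.PREMIUM_REALISM: "sora-2",
}


def build_job(workload: Workload, prompt: str) -> dict:
    """One request shape for every job; only the model field changes by workload.

    The field names are placeholders, not EvoLink's documented schema.
    """
    return {"model": ROUTES[workload], "prompt": prompt}


if __name__ == "__main__":
    jobs = [
        (Workload.SHORT_FORM, "Sneaker spinning on a white background"),
        (Workload.PREMIUM_REALISM, "Slow dolly past a glass perfume bottle"),
    ]
    for workload, prompt in jobs:
        print(build_job(workload, prompt)["model"])
```

Keeping the mapping in one table means reassigning a workload to a different model is a one-line change, which is the practical payoff of a single integration layer.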

FAQ

Which model is best overall?

There is no universal winner. The better choice depends on whether your workflow is control-first, production-first, or realism-first.

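Which model is best for reference-heavy creative work?

Seedance 2.0. Its reference-heavy workflow and more directed camera behavior make it the better fit when prompts alone are not enough.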

Which model is best for short-form social or e-commerce production?

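Kling 3.0. Its practical 3-15 second range and entry pricing (from $0.075/s on EvoLink) make it easier to use in repeatable social, e-commerce, and batch content pipelines.

Which model is cheapest today?

Of the prices listed in this comparison, Kling 3.0 starts lowest, from $0.075/s on EvoLink. OpenAI's official listed base price for Sora 2 is $0.10/s.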